
Scooped by

Complexity Digest

May 3, 10:26 AM

|

The universe creatively sets the rules for its own becoming. Stuart Kauffman is a theoretical biologist and leading complexity scientist who has argued that the self-organization of organisms is as influential in evolution as natural selection. His seminal book on the subject is “The Origins of Order: Self-Organization and Natural Selection in Evolution” (1993). He spoke recently with Noema Editor-in-Chief Nathan Gardels. Read the full article at: www.noemamag.com

|

May 1, 3:34 PM

|

Reda Benkirane Complex Systems, 34(4), 2026 pp. 387–400. Complexity, a term that is both ambiguous and multifaceted, is used widely today. Various legitimate definitions can be proposed for it, as is the case with “ample” notions such as intelligence, consciousness or culture. The recurrent mention of this term can be attributed to the transformation of our societies and their artifacts, as well as the acceleration of time brought by the digital revolution—a technological upheaval comparable to the invention of writing and the printing press. Read the full article at: www.complex-systems.com

|

April 30, 1:46 PM

|

Lluís Danús, William Dinneen, Carolina Torreblanca, Guy Grossman, and Sandra González-Bailón PNAS 123 (18) e2511050123 The term “invisible college” refers to communication networks that help scientists exchange information and advance knowledge. These networks create social capital, granting access to resources like new ideas and support. Measuring those intangible exchanges is an empirical challenge. Here we approximate these ties through the analysis of the “thank you” notes appended to journal articles. Our findings show that scholars disconnected from this layer of academic social capital have lower publication impact. We also show that informal ties provide support not captured by coauthorship ties, which reflect a more rigid form of collaboration. Documenting how informal structures of support operate can help leverage collective resources in the pursuit of shared intellectual goals. Read the full article at: www.pnas.org

|

April 29, 1:42 PM

|

Luis A. Nunes Amaral; Arthur Capozzi; Dirk Helbing

R Soc Open Sci. (2026) 13 (4): 251727 . Organizations learn from market, political and societal responses to their actions. While in some cases both the actions and responses take place in an open manner, in many others, some aspects may be hidden from external observers. The Eurovision Song Contest offers a mostly open-data case in which to study organizational level learning at the levels of organizers and participants. We present here evidence for changes in the rules of the Contest in response to undesired outcomes such as runaway winners. We also find strong evidence of participant learning in the characteristics of competing songs over the 70 years of the Contest. English has been adopted as the lingua franca of the competing songs and pop has become the standard genre. The number of words of lyrics has also grown in response to this collective learning. Remarkably, we find evidence that France, Italy, Portugal and Spain have chosen to ignore the ‘lesson’ that English lyrics increase winning probability, consistent with utility functions that award greater value to featuring national culture than to winning the Contest. These countries—but not Germany—appear to be less susceptible to Anglo-Saxon cultural influence than their peers, a resistance that may extend beyond cultural matters. Read the full article at: royalsocietypublishing.org

|

April 11, 11:12 AM

|

JÉRÔME M. W. GIPPET, COLIN J. CARLSON, TRISTAN KLAFTENBERGER, MATTÉO SCHWEIZER, EVAN A. ESKEW, MEREDITH L. GORE, AND CLEO BERTELSMEIER SCIENCE 9 Apr 2026 Vol 392, Issue 6794 pp. 178-182 The wildlife trade affects a quarter of terrestrial vertebrates and creates opportunities for cross-species pathogen transmission, but its precise role in shaping animal-human pathogen exchange remains unclear. In our analysis of 40 years of global wildlife trade data, we show that traded mammals are 1.5-fold as likely to share pathogens with humans as nontraded mammals, and that illegal and live-animal trade further exacerbate pathogen sharing. Time spent in trade predicts the number of zoonotic pathogens that a wildlife species hosts. On average, a species shares an additional pathogen with humans for every 10 years it is traded. Read the full article at: www.science.org

|

April 7, 12:11 PM

|

Kishore Vasan, Márton Karsai & Albert-László Barabási

Scientific Reports The metaverse is a virtual space enabling interactions beyond geographical boundaries, promising to transform how people engage with each other both in the digital and the physical worlds. The lack of geographical boundaries and travel costs in the metaverse prompts us to ask if the fundamental laws that govern human mobility in the physical world apply. We collected data on avatar movements from Decentraland, along with their network mobility extracted from NFT purchases on Ethereum and Polygon. We find that despite the absence of mobility costs, an individual’s inclination to visit new locations diminishes over time, limiting movement to a small fraction of the metaverse. We also find a lack of correlation between land prices and visitation, a deviation from the patterns characterizing the physical world. Finally, we identify the scaling laws that characterize meta mobility and show that we need to add preferential selection to the existing models to explain quantitative patterns of metaverse mobility. Our ability to predict the characteristics of the emerging meta mobility network implies that the laws governing human mobility are rooted in fundamental patterns of human dynamics, rather than the nature of space and cost of movement. Read the full article at: www.nature.com

|

April 3, 6:47 PM

|

Danielle L. Chase, Daniel Zhu, Mahi Kathait, Henry Robertson, Jash Shah, Sully Harrer, Gary Nave, Nolan R. Bonnie, Orit Peleg When honeybee colonies reproduce by fission, several thousand bees and their queen depart the parental nest and temporarily form a dense cluster on a tree branch or other surface while searching for a new nest site. Once the new nest site is selected, the swarm disassembles and flies toward it. How honeybees transition rapidly between dispersed flight and an aggregated cluster remains an open question. Here, we develop an experimental system and three-dimensional imaging pipeline to track individual flying bees together with the evolving morphology of the swarm during formation and dissolution. We report results from a representative swarming event. During assembly, swarms rapidly form low-density clusters before undergoing a slower contraction to a more dense steady state configuration. In contrast, disassembly occurs significantly faster than assembly and is characterized by strongly divergent flight, with bees departing the swarm in all directions. Overall, this method is able to demonstrate the coupled flight and morphological dynamics that underlie honeybee swarm assembly. Because the system is relatively low-cost and low-power, it is readily adaptable for three-dimensional imaging of other biological collectives in naturalistic environments. Read the full article at: www.biorxiv.org

|

April 2, 4:54 PM

|

Tenta Tani

We theoretically investigate how information flows when two particles interact with each other. Understanding the physical mechanisms of directional information flow is crucial for advancing information thermodynamics and stochastic computing. However, the fundamental connection between mechanical motion and causal information transfer remains elusive. To focus only on essential effects of physical dynamics, we examine two interacting Brownian particles confined in a one-dimensional potential. By simulating their Langevin dynamics, we quantify the causal information exchange using transfer entropy. We demonstrate that a mass asymmetry inherently breaks the symmetry of information flow, inducing a net directional transfer from the heavier to the lighter particle. Physically, the heavier particle, possessing larger inertia and higher active information storage, retains the memory of its trajectory longer against thermal fluctuations, thereby acting as a source of information. We analytically clarify that this net transfer is governed by a competition between the difference in memory capacity and the predictability of the particle trajectories. Furthermore, we reveal that the net information flow scales logarithmically with the mass ratio. These findings provide essential insights into the physical significance of transfer entropy and the nature of information flow in general physical systems. Read the full article at: arxiv.org

|

March 25, 9:09 AM

|

Jonas Wickman, Christopher A. Klausmeier, and Elena Litchman The American Naturalist Environmental variability, in the form of either temporal fluctuations or intermittent perturbations, affects virtually all ecological systems. However, while temporal variability is widely recognized to play an important role across many ecological and evolutionary subdisciplines, there is no high-level cross-cutting concept that describes how species, communities, and ecosystems respond to variability. In this article we propose that “antifragility” could serve well as such a concept. Initially used in economics, antifragility denotes that a property or metric of performance increases with variability. To showcase the breadth of applicability and utility of the concept, we examine two mathematical models for antifragility in ecosystem services and competition. We also demonstrate some of the nuances and possible misapplications of the concept. Under global change, the variability of environmental conditions is expected to change. We believe that antifragility could serve as a useful concept in coordinating research efforts toward understanding the effects of these changes. Read the full article at: www.journals.uchicago.edu
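
The definition quoted above (performance that increases with variability) can be illustrated with a toy calculation. This is a minimal sketch, not one of the two models examined in the article: the response functions `convex` and `concave`, the two-state environment, and the helper `mean_performance` are all assumptions made for this example. It relies only on Jensen's inequality: averaging a convex response over a fluctuating environment gives more than the response at the average environment, and a concave response gives less.

```python
import math

def mean_performance(response, mean, amplitude):
    """Average of `response` when the environment spends equal time
    at mean - amplitude and mean + amplitude (a two-state fluctuation)."""
    return 0.5 * (response(mean - amplitude) + response(mean + amplitude))

def convex(x):
    # Hypothetical accelerating response: gains from variability.
    return x ** 2

def concave(x):
    # Hypothetical saturating response: loses from variability.
    return math.sqrt(x)

# Same mean environment (2.0), increasing fluctuation amplitude.
for amplitude in (0.0, 0.5, 1.0):
    print(amplitude,
          mean_performance(convex, 2.0, amplitude),
          mean_performance(concave, 2.0, amplitude))
```

With the mean held fixed, the convex column grows with the amplitude while the concave column shrinks, which is the sense in which the first response would be called antifragile and the second fragile.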

|

March 15, 2:35 PM

|

Dashun Wang As artificial-intelligence systems take on more of the scientific workflow, the central goal should not be complete automation, but designing platforms that preserve creativity, responsibility and surprise. Read the full article at: www.nature.com

|

March 12, 2:37 PM

|

Lucas Lacasa

In network science, collective dynamics of complex systems are typically modelled as (nonlinear, often including many-body) vertex-level update rules evolving over a graph interaction structure. In recent years, frameworks that explicitly model such higher-order interactions in the interaction backbone (i.e. hypergraphs) have been advanced, somehow shifting the imputation of the effective nonlinearity from the dynamics to the interaction structure. In this work we discuss such structural-dynamical representation duality, and investigate how and when a nonlinear dynamics defined on the vertex set of a graph allows an equivalent representation in terms of a linear dynamics defined on the state space of a sufficiently richer, higher-order interaction structure. Using Carleman linearisation arguments, we show that finite polynomial dynamics defined in the |V| vertices of a graph admit an exact representation as linear dynamics on the state space of an hb-graph of order |V|, a combinatorial structure that extends hypergraphs by allowing vertex multiplicity, where the specific shape of the nonlinearity indicates whether the hb-graph is either finite or infinite (in terms of the number of hb-edges). For more general analytic nonlinearities, an exact linear representation always requires an hb-graph of infinite size, and its finite-size truncation provides an approximate representation of the original nonlinear graph-based dynamics. Read the full article at: arxiv.org
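
For readers unfamiliar with Carleman linearisation, the standard scalar textbook case may help. This is only an illustration of the general idea, not the paper's hb-graph construction; the coefficients $a$, $b$ and the monomial variables $y_k$ are notation assumed for this example.

```latex
\dot{x} = a x + b x^{2}, \qquad y_k := x^{k} \quad (k = 1, 2, \dots)
\quad\Longrightarrow\quad
\dot{y}_k = k\, x^{k-1}\, \dot{x} = k a\, y_k + k b\, y_{k+1}.
```

The nonlinear equation in $x$ becomes an infinite chain of linear equations in the monomials $y_k$. Setting $y_{K+1} = 0$ for some finite $K$ closes the chain and gives a finite linear system that only approximates the original dynamics, in the same spirit as the finite-size truncation mentioned in the abstract.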

|

March 11, 3:14 PM

|

ONERVA KORHONEN Advances in Complex Systems Vol. 28, No. 08, 2530001 (2025) Network analysis has become a powerful tool in various fields. However, the increasing popularity comes with potential problems. Unfamiliarity with the characteristics of the systems under investigation complicates network model construction and interpretation of analysis outcomes. While these issues require special attention in studies that apply the increasingly complex higher-order connectivity models, similar problems are associated with all, even the simplest, network models. Alongside technical issues, network scientists face a philosophical question: can the network approach discover the fundamental nature of a system, on the one hand, and produce useful information, on the other? In this perspective, I review the potential problems of the network approach and propose two solutions to address them: active evaluation of the potential and limitations of the network framework before applying a network model and a transition toward an interdisciplinary research practice to interpret analysis outcomes in their right context. Read the full article at: www.worldscientific.com

|

March 10, 6:28 PM

|

How and why do complex chemical and biological systems self-organize into ordered states far from thermodynamic equilibrium? Despite advances in thermodynamics, kinetics, and information theory, a unifying principle that links organization and efficiency across scales has remained elusive. In open systems, productive-event trajectories are conditioned on starting at a source and ending at a sink. This work proposes a stochastic–dissipative least-action triad framework in which (i) a path-ensemble weighting biases trajectories by their action cost, (ii) feedback processes sharpen this distribution, and (iii) the ensemble evolves toward a least-average-action attractor, decreasing during self-organization and increasing during decay. A parametric cross-scale metric—Average Action Efficiency (AAE)—is defined, which is inversely proportional to the average action per productive event. Under reinforcing feedback, identities derived from the exponential-family path measure show that the average action decreases and AAE rises monotonically. In future extensions, this formulation could help bridge quantum, classical, and biological regimes while remaining computationally tractable, because its empirical version relies on aggregate energetic and timing data rather than enumerating individual trajectories. AAE reaches a local maximum at a non-equilibrium steady state under fixed operational context, consistent with the present formulation, and connections to thermodynamic and informational measures are made. A companion article (Part II) details empirical estimation strategies and applications (Georgiev, 2025a). Georgi Yordanov Georgiev BioSystems Volume 262, April 2026, 105647 Read the full article at: www.sciencedirect.com

See Also: Part II: Empirical estimation, Average Action Efficiency, and applications to ATP synthase

|

May 2, 10:32 AM

|

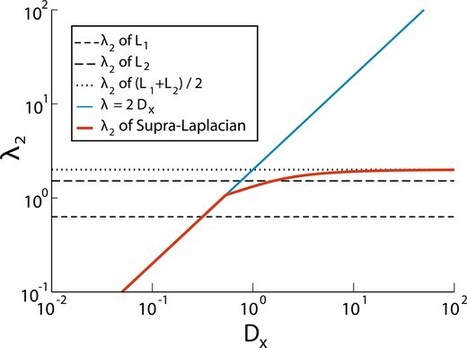

Alberto Aleta, Andreia Sofia Teixeira, Guilherme Ferraz de Arruda, Andrea Baronchelli, Alain Barrat, János Kertész, Albert Díaz-Guilera, Oriol Artime, Michele Starnini, Giovanni Petri, Márton Karsai, Siddharth Patwardhan, Kathryn Coronges, Ann McCranie, Alessandro Vespignani, Yamir Moreno, Santo Fortunato. Journal of Complex Networks, Volume 14, Issue 2, April 2026, cnag007. Multilayer network science has emerged as a central framework for analysing interconnected and interdependent complex systems. Its relevance has grown substantially with the increasing availability of rich, heterogeneous data, which makes it possible to uncover and exploit the inherently multilayered organisation of many real-world networks. In this review, we summarise recent developments in the field. On the theoretical and methodological front, we outline core concepts and survey advances in community detection, dynamical processes, temporal networks, higher-order interactions, and machine-learning-based approaches. On the application side, we discuss progress across diverse domains, including interdependent infrastructures, spreading dynamics, computational social science, economic and financial systems, ecological and climate networks, science-of-science studies, network medicine, and network neuroscience. We conclude with a forward-looking perspective, emphasizing the need for standardised datasets and software, deeper integration of temporal and higher-order structures, and a transition toward genuinely predictive models of complex systems. Read the full article at: academic.oup.com

|

May 1, 10:31 AM

|

Adam B. Barrett, Borjan Milinkovic, Pedro A. M. Mediano, Fernando E. Rosas, Daniel Bor, Lionel Barnett, Anil K. Seth. The integrated information theory of consciousness (IIT) is uniquely ambitious in proposing a mathematical formula, derived from apparently fundamental properties of conscious experience, to describe the quantity and quality of consciousness for any physical system that possesses it. IIT has generated considerable debate, which has engendered some misunderstandings and misrepresentations. Here we address and hope to remedy this. We begin by concisely summarising the essentials of IIT. Given IIT is supposed to apply universally, we do this with reference to an arbitrary patch of matter, as opposed to the usual system of discrete computational units. Then, after briefly summarising IIT's theoretical and empirical achievements, we focus on five points which we consider especially important for driving forward new theory and increasing understanding. First, a high value of the measure Φ is not synonymous with 'more consciousness'. We describe how Φ might be replaced with a suite of quantities to obtain a multi-dimensional characterisation of states of consciousness. Second, we describe with nuance the distinct flavour of panpsychism implied by IIT, whereby space (and time) are tiled with substrates of (proto-)consciousness, and find this is not problematic for the theory. Third, Φ is not well-defined for real physical systems, and has not been computed on any real physical system. Fourth, so far only proxies for IIT measures have been computed, and not approximations. Fifth, for IIT to fit with current successful theories in fundamental physics, a reformulation in terms of continuous fields would be needed. Read the full article at: arxiv.org

|

April 29, 2:35 PM

|

Yasuhiro Hashimoto, Hiroki Sato, and Takashi Ikegami Entropy 2026, 28(4), 398

Social media platforms offer unprecedented opportunities to study cultural evolution by analyzing digital traces. This study presents a methodological framework for analyzing the temporal dynamics of cultural modules in hashtag co-occurrence networks. We address the inherent challenges of analyzing dense, skewed, and highly variable cultural networks by introducing a perturbation ensemble clustering approach that distinguishes stable from unstable structural elements. By applying the Leiden algorithm to a perturbed ensemble of hashtag networks, we identify robust core modules and their stable periphery, and distinguish them from floating elements with unstable associations. Analysis of four years of data from a major photo-sharing platform reveals complex patterns in the evolution of cultural modules, including both stable associations and dynamic reorganizations. Our findings demonstrate how ensemble clustering techniques can effectively capture the interplay between stability and change in evolving cultural systems.

Read the full article at: www.mdpi.com

|

April 29, 11:04 AM

|

Tetsushi Ohdaira Chaos, Solitons & Fractals

Volume 208, Part 4, July 2026, 118382. This study modifies the model from previous studies and considers three types of inter-individual relationships: regular, random, and scale-free ring lattices. Furthermore, we introduce defectors, who do not contribute to the public goods; cooperators, who contribute to the public goods; and loners, who do not participate in the public goods framework. We assume that each of these three types of individuals punishes other individuals with a probability proportional to the difference between their own payoff and their opponent's average payoff including them. Using this modified pool punishment model, this study shows the following. Firstly, the damage to the average payoff due to excessive punishment is kept significantly low. Secondly, antisocial punishment is not evolutionarily advantageous, and cooperators always become advantageous. Finally, the final average payoff is always higher than that of pool punishment in existing studies and roughly comparable to that of peer punishment in existing studies. The results of this study suggest that the claim of the existing study is not always correct; that is, even if antisocial punishment is possible, it does not have an evolutionary advantage, and cooperators always become advantageous, which in turn solves the problem of antisocial punishment. This study is being conducted as part of efforts to improve specialized education at Kanagawa Institute of Technology. Read the full article at: www.sciencedirect.com

|

April 7, 4:07 PM

|

Tim Pollmann and Jochen Staudacher Complexities 2026, 2(1), 7

Shapley values are the most widely used point-valued solution concept for cooperative games and have recently garnered attention for their applicability in explainable machine learning. Due to the complexity of Shapley value computation, users mostly resort to Monte Carlo approximations for large problems. We take a detailed look at an approximation method grounded in multilinear extensions proposed in 2021 under the name “Owen sampling”. We point out why Owen sampling is biased and propose unbiased alternatives based on combining multilinear extensions with stratified sampling and importance sampling. Finally, we discuss empirical results of the presented algorithms for various cooperative games, including real-world explainability scenarios.

Read the full article at: www.mdpi.com
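
To make the Monte Carlo idea in the abstract concrete, here is a minimal sketch of the simplest such estimator, plain permutation sampling, checked against exact enumeration. It is not Owen sampling and not one of the stratified or importance-sampling variants the paper proposes. The three-player "glove game" and the function names `exact_shapley`, `sampled_shapley` and `glove_game` are assumptions chosen for this example.

```python
import itertools
import math
import random

def exact_shapley(players, value):
    """Exact Shapley values: average each player's marginal
    contribution over every ordering of the players."""
    phi = {p: 0.0 for p in players}
    for order in itertools.permutations(players):
        coalition = frozenset()
        for p in order:
            with_p = coalition | {p}
            phi[p] += value(with_p) - value(coalition)
            coalition = with_p
    n_orders = math.factorial(len(players))
    return {p: total / n_orders for p, total in phi.items()}

def sampled_shapley(players, value, n_samples, seed=0):
    """Monte Carlo estimate: same average, but over randomly drawn orderings."""
    rng = random.Random(seed)
    phi = {p: 0.0 for p in players}
    order = list(players)
    for _ in range(n_samples):
        rng.shuffle(order)
        coalition = frozenset()
        for p in order:
            with_p = coalition | {p}
            phi[p] += value(with_p) - value(coalition)
            coalition = with_p
    return {p: total / n_samples for p, total in phi.items()}

def glove_game(coalition):
    # Hypothetical game: player 0 holds a left glove, players 1 and 2
    # hold right gloves; a coalition is worth 1 if it can form a pair.
    has_right = 1 in coalition or 2 in coalition
    return 1.0 if 0 in coalition and has_right else 0.0

players = (0, 1, 2)
exact = exact_shapley(players, glove_game)
approx = sampled_shapley(players, glove_game, n_samples=2000)
print(exact)
print(approx)
```

Exact enumeration costs n! orderings, which is why sampling is the usual fallback for large games. Permutation sampling is unbiased by construction, so the estimate differs from the exact values only by sampling noise.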

|

April 4, 6:52 PM

|

Adams, Fred C.

Both the fundamental constants that describe the laws of physics and the cosmological parameters that determine the properties of our universe must fall within a range of values in order for the cosmos to develop astrophysical structures and ultimately support life. This paper reviews the current constraints on these quantities. The discussion starts with an assessment of the parameters that are allowed to vary. The standard model of particle physics contains both coupling constants ($\alpha$, $\alpha_s$, $\alpha_w$) and particle masses ($m_u$, $m_d$, $m_e$), and the allowed ranges of these parameters are discussed first. We then consider cosmological parameters, including the total energy density of the universe ($\Omega$), the contribution from vacuum energy ($\rho_\Lambda$), the baryon-to-photon ratio ($\eta$), the dark matter contribution ($\delta$), and the amplitude of primordial density fluctuations ($Q$). These quantities are constrained by the requirements that the universe lives for a sufficiently long time, emerges from the epoch of Big Bang Nucleosynthesis with an acceptable chemical composition, and can successfully produce large scale structures such as galaxies. On smaller scales, stars and planets must be able to form and function. The stars must be sufficiently long-lived, have high enough surface temperatures, and have smaller masses than their host galaxies. The planets must be massive enough to hold onto an atmosphere, yet small enough to remain non-degenerate, and contain enough particles to support a biosphere of sufficient complexity. These requirements place constraints on the gravitational structure constant ($\alpha_G$), the fine structure constant ($\alpha$), and composite parameters ($C_\star$) that specify nuclear reaction rates. We then consider specific instances of possible fine-tuning in stellar nucleosynthesis, including the triple alpha reaction that produces carbon, the case of unstable deuterium, and the possibility of stable diprotons. For all of the issues outlined above, viable universes exist over a range of parameter space, which is delineated herein. Finally, for universes with significantly different parameters, new types of astrophysical processes can generate energy and thereby support habitability. Read the full article at: ui.adsabs.harvard.edu

|

April 2, 7:50 PM

|

Steven D Shaw, Gideon Nave. People increasingly consult generative artificial intelligence (AI) while reasoning. As AI becomes embedded in daily thought, what becomes of human judgment? We introduce Tri-System Theory, extending dual-process accounts of reasoning by positing System 3: artificial cognition that operates outside the brain. System 3 can supplement or supplant internal processes, introducing novel cognitive pathways. A key prediction of the theory is "cognitive surrender": adopting AI outputs with minimal scrutiny, overriding intuition (System 1) and deliberation (System 2). Across three preregistered experiments using an adapted Cognitive Reflection Test (N = 1,372; 9,593 trials), we randomized AI accuracy via hidden seed prompts. Participants chose to consult an AI assistant on a majority of trials (>50%). Relative to baseline (no System 3 access), accuracy significantly rose when AI was accurate and fell when it erred (+25/-15 percentage points; Study 1), the behavioral signature of cognitive surrender (AI-Accurate vs. AI-Faulty contrast; Cohen's h = 0.81). Engaging System 3 also increased confidence, even following errors. Time pressure (Study 2) and per-item incentives and feedback (Study 3) shifted baseline performance but did not eliminate this pattern: when accurate, AI buffered time-pressure costs and amplified incentive gains; when faulty, it consistently reduced accuracy regardless of situational moderators. Across studies, participants with higher trust in AI and lower need for cognition and fluid intelligence showed greater surrender to System 3. Tri-System Theory thus characterizes a triadic cognitive ecology, revealing how System 3 reframes human reasoning and may reshape autonomy and accountability in the age of AI. Read the full article at: papers.ssrn.com

|

March 29, 12:53 PM

|

Thomas F. Varley, Josh Bongard

The study of complex systems has produced a huge library of different descriptive statistics that scientists can use to describe the various emergent patterns that characterize complex systems. The problem of engineering systems to display those patterns from first principles is a much harder one, however, as a hallmark of complexity is that macro-scale emergent properties are often difficult to predict from micro-scale features. Here, we propose a general optimization-based pipeline to automate the difficult problem of engineering emergent features by re-purposing descriptive statistics as loss functions, and letting a gradient descent optimizer do the hard work of designing the relevant micro-scale features and interactions. Using Kuramoto systems of coupled oscillators as a test bed, we show that our approach can reliably produce systems with non-trivial global properties, including higher-order synergistic information, multi-attractor metastability, and meso-scale structures such as modules and integrated information. We further show that this pipeline can also account for and accommodate constraints on the system properties, such as the costs of connections, or topological restrictions. This work is a step forward on the path moving complex systems science from a field predicated largely on description and post-hoc storytelling towards one capable of engineering real-world systems with desirable emergent meso-scale and macro-scale properties. Read the full article at: arxiv.org
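
The core move described above, treating a descriptive statistic as a loss and letting an optimizer tune the system, can be sketched on a very small scale. This is an illustration under several assumptions, not the authors' pipeline: the statistic is the Kuramoto order parameter, only a single global coupling strength is tuned, and a central finite-difference gradient stands in for automatic differentiation. The names `order_parameter`, `simulate`, `loss` and `fit_coupling`, and all numerical settings, are hypothetical.

```python
import cmath
import math
import random

def order_parameter(phases):
    """Kuramoto order parameter r in [0, 1]; r = 1 means full synchrony."""
    return abs(sum(cmath.exp(1j * p) for p in phases)) / len(phases)

def simulate(coupling, freqs, phases0, dt=0.05, steps=200):
    """Euler-integrate all-to-all Kuramoto oscillators; return final phases."""
    phases = list(phases0)
    for _ in range(steps):
        z = sum(cmath.exp(1j * p) for p in phases) / len(phases)
        r, psi = abs(z), cmath.phase(z)
        # Mean-field identity:
        # (K/N) * sum_j sin(theta_j - theta_i) = K * r * sin(psi - theta_i)
        phases = [p + dt * (w + coupling * r * math.sin(psi - p))
                  for p, w in zip(phases, freqs)]
    return phases

def loss(coupling, freqs, phases0, target_r):
    """Descriptive statistic reused as a loss: squared gap to the target r."""
    achieved = order_parameter(simulate(coupling, freqs, phases0))
    return (achieved - target_r) ** 2

def fit_coupling(freqs, phases0, target_r, start=0.5, lr=1.0, eps=0.05, iters=20):
    """Gradient descent on the coupling using a finite-difference gradient."""
    k = start
    for _ in range(iters):
        grad = (loss(k + eps, freqs, phases0, target_r)
                - loss(k - eps, freqs, phases0, target_r)) / (2 * eps)
        k -= lr * grad
    return k

rng = random.Random(0)
freqs = [rng.gauss(0.0, 0.5) for _ in range(10)]                  # natural frequencies
phases0 = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(10)]    # initial phases
k_fit = fit_coupling(freqs, phases0, target_r=0.8)
print(k_fit, order_parameter(simulate(k_fit, freqs, phases0)))
```

A fixed learning rate and a noisy finite-difference gradient give no convergence guarantee here. The point is only the structure: simulate, score with a descriptive statistic, and step the parameters downhill.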

|

March 15, 4:39 PM

|

Vicky Chuqiao Yang, James Holehouse, Hyejin Youn, José Ignacio Arroyo, Sidney Redner, Geoffrey B. West, and Christopher P. Kempes. PNAS 123 (7) e2509729123. Diversification and specialization are central to complex adaptive systems, yet overarching principles across domains remain elusive. We introduce a general theory that unifies diversity and specialization across disparate systems, including microbes, federal agencies, companies, universities, and cities, characterized by two key parameters. We show from extensive data that function diversity scales with system size as a sublinear power law, resembling Heaps’ law, in all but cities, where it is logarithmic. Our theory explains both behaviors and suggests that function creation depends on system goals and structure: federal agencies tend to ensure functional coverage; cities slow new function growth as old ones expand, and cells occupy an intermediate position. Once functions are introduced, their growth follows a remarkably universal pattern across all systems. Read the full article at: www.pnas.org

|

March 13, 2:40 PM

|

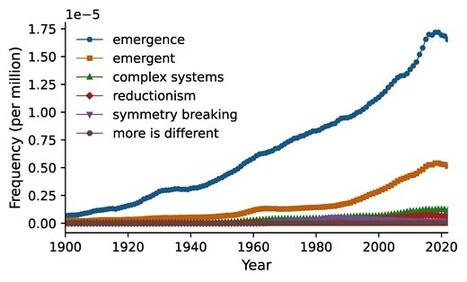

Abbas K Rizi. PNAS Nexus, Volume 5, Issue 2, February 2026, pgag010. The term emergence is increasingly used across scientific disciplines to describe phenomena that arise from interactions among a system's components but cannot be readily inferred by examining those components in isolation. While often invoked to explain higher-level behaviors (such as flocking, synchronization, or collective intelligence), the term is frequently used without precision, sometimes giving rise to ambiguity or even mystique. In this perspective paper, I clarify the scientific meaning of emergence as a measurable and physically grounded phenomenon. Through concrete examples (such as temperature, magnetism, and herd immunity in social networks), I review how collective behavior can arise from local interactions that are constrained by global boundaries. By refining the concept of emergence, it is possible to gain a clearer and more grounded understanding of complex systems. My goal is to show that emergence, when properly framed, offers not mysticism, but rather insight. Read the full article at: academic.oup.com

|

March 12, 9:25 AM

|

Sara Imari Walker

One of the longest standing open problems in science is how life arises from non-living matter. If it is possible to measure this transition in the lab, then it might be possible to understand the physical mechanisms by which the emergence of life occurs, which so far have evaded scientific understanding. A significant hurdle is the lack of standards or a framework for cross comparison across different experimental contexts and planetary environments. In this essay, I review current challenges in experimental approaches to origin of life chemistry, focusing on those associated with quantifying experimental selectivity versus de novo generation of molecular complexity, and I highlight new methods using molecular assembly theory to measure molecular complexity. This metrology-centered approach can enable rigorous testing of hypotheses about the cascade of major transitions in molecular order marking the emergence of life, while potentially bridging traditional divides between metabolism-first and genetics-first scenarios. Grounding the study of life's origins in measurable complexity has significant implications for the search for life beyond Earth, suggesting paths toward theory-driven detection of biological complexity in diverse planetary contexts. As the field moves forward, standardized measurements of molecular complexity may help unify currently disparate approaches to understanding how matter transforms to life. Much remains to be done in this exciting frontier. Read the full article at: arxiv.org

|

March 11, 2:32 PM

|

Costolo, Michael. This paper introduces a constraint-limited model of combinatorial growth that examines how feasibility scales with increasing system dimensionality. The framework analyzes the balance between expanding possibility spaces and constraint structures that prune feasible configurations. The model shows that when feasible configurations grow as c^n within a combinatorial space of size 2^n, the feasible fraction collapses for constant c < 2. Sustained novelty generation therefore requires c(n) to approach the combinatorial base, producing a narrow “complexity corridor” between regimes of trivial repetition and combinatorial sparsity. The paper derives the analytic structure of this corridor and explores it through numerical simulations and visualizations. The results suggest a possible structural explanation for why complex systems may emerge only within a narrow range where combinatorial expansion and constraint relaxation operate at comparable scales. The manuscript includes the full mathematical derivation, simulation results, and discussion of implications for complex systems. Read the full article at: zenodo.org
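
The collapse of the feasible fraction stated in the abstract follows from simple arithmetic: c^n / 2^n = (c/2)^n, which decays geometrically whenever c < 2. A minimal sketch, assuming the feasible count is exactly c^n; the function name `feasible_fraction` and the sample values of c and n are chosen for illustration and do not come from the paper.

```python
import math

def feasible_fraction(c: float, n: int) -> float:
    """Fraction of a 2**n configuration space that is feasible when the
    number of feasible configurations is assumed to grow as c**n."""
    # Work in log space so that large n cannot overflow.
    return math.exp(n * (math.log(c) - math.log(2.0)))

# Fractions for growing dimensionality n at three growth bases c.
for c in (1.5, 1.9, 2.0):
    print(c, [feasible_fraction(c, n) for n in (10, 50, 100)])
```

Only c = 2 keeps the fraction constant. Any fixed base below 2, however close, drives the fraction toward zero as n grows, which is why the abstract has c(n) approaching the combinatorial base.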

|
