
|

Scooped by

Nicolas Weil

May 14, 2012 5:18 AM

|

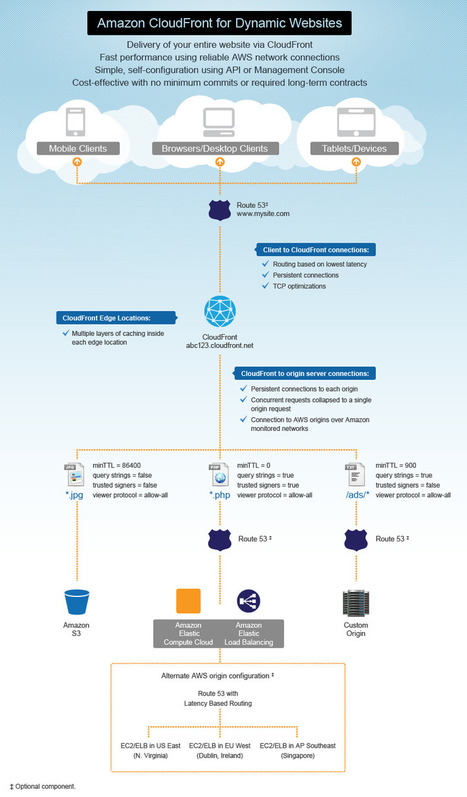

In the past three and a half years, Amazon CloudFront has changed the content delivery landscape. It has demonstrated that a CDN does not have to be complex to use, with expensive contracts, minimum commits, or upfront fees that lock you into a single vendor for a long time. CloudFront is simple, fast and reliable with the usual pay-as-you-go model. With just one click you can enable content to be distributed to the customer with low latency and high reliability. Today Amazon CloudFront has taken another major step forward in ease of use. It now supports delivery of entire websites containing both static objects and dynamic content. With these features, CloudFront makes it as simple as possible for customers to speed up delivery of their entire dynamic website running in Amazon EC2/ELB (or third-party origins), without needing to worry about which URLs should point to CloudFront and which ones should go directly to the origin.

|

Scooped by

Nicolas Weil

May 7, 2012 5:09 AM

|

When Red Hat puffed up the OpenShift platform cloud last May, it was only available as a service and it only ran atop Amazon's EC2 compute cloud. But now, with the release of the source code behind OpenShift, which is now called OpenShift Origin, developers can grab the platform cloud and plunk it atop other cloud fabrics – including OpenStack, which Red Hat and IBM formally endorsed several weeks ago after the OpenStack community got its governance issues sorted to the liking of Shadowman and Big Blue. From the look of the specs, Red Hat seems to have opened up all of the code for the OpenShift stack, not just the OpenShift Express variant, which was the entry edition of the product that ran on EC2 and that was made freely available by Red Hat so it could get developers to try out its alternative to VMware's Cloud Foundry.

|

Scooped by

Nicolas Weil

April 23, 2012 11:29 AM

|

Kaazing today announced that its enterprise-class HTML5 WebSocket Platform is now available as a service on Amazon EC2 (Elastic Compute Cloud). For the first time, any individual or organization planning to offer a 'living web' application for gaming, retail, financial, mobile and many other markets can reap the real-time benefits of the Kaazing platform and the new WebSocket standard, and get the security and scalability required for fast moving and growing business environments.

|

Scooped by

Nicolas Weil

April 10, 2012 6:28 AM

|

A question I get asked a lot is “what can I do with Mule?” For anyone who has looked at proprietary middleware vendors, ESB is often categorized as a mediation engine and nothing else. They do this to sell more products. Our philosophy is that an integration platform should do a lot more. An integration platform often becomes the central nervous system for applications to talk to, and respond to, each other. As such, you need a platform that covers different use cases and provides an end-to-end solution for those use cases. So this question isn’t just about Mule. It’s “what should I expect from my integration platform?” Today, I’m going to introduce some scenarios:

- Application Orchestration
- API layer
- Legacy System Modernization
- Enterprise Service Bus
- Cloud Integration

|

Scooped by

Nicolas Weil

April 2, 2012 6:19 PM

|

I often think it's ironic that while the mission of REST is to simplify Web development, REST itself is beset with seemingly complex jargon and architecture patterns. I say "seemingly complex" because, once you look into REST architecture in depth, it actually is simple. In some ways, it's almost too simple. It's easy to rack your brains about some REST pattern, but then realize: It's just how the Web works. I'm reminded of the line from Moliere about the bourgeois gentleman who spends years trying to understand how he could speak in "prose", then he exclaims "Good heavens! For more than forty years I have been speaking prose without knowing it". So it is with REST. HTTP verbs, URLs, and resources are just what we've been doing for years. There are various levels of REST though, most famously categorized in the Richardson Maturity Model. At the top of this maturity model is HATEOAS. Actually I think "maturity model" is a bit of a misleading name, because ideally you don't want to start with a very brittle URL and then "mature" it to HATEOAS, since that puts undue requirements onto your client applications. It is better to start with HATEOAS principles from the start.

|

Scooped by

Nicolas Weil

March 20, 2012 2:08 PM

|

Cloud platform provider Akamai Technologies introduced Terra Alta, an enterprise-class solution designed to address the evolving complexities of application acceleration in the cloud. Terra Alta helps simplify the process of developing and deploying applications in the cloud, making it easier to optimize hundreds or thousands of applications with greater flexibility and control. Terra Alta has also been designed to enable an increasingly mobile enterprise user base, and is available with packages supporting sets of three, five and 10 applications.

Features designed to deliver acceleration capabilities across a broad set of Web applications include Web de-duplication, designed to ensure that only differences in the objects that have already been sent by the origin are delivered, reducing bandwidth consumption required for application delivery; Akamai Instant, which retrieves what are assessed as the most likely pages to be next requested by the user; and dynamic page caching, which allows pages that were previously considered dynamic and un-cacheable to be conditionally cached.

|

Scooped by

Nicolas Weil

March 17, 2012 6:00 AM

|

We know that Linux on servers is big and getting bigger. We also knew that Linux, thanks to open-source cloud programs like Eucalyptus and OpenStack, was growing fast on clouds. What we hadn’t known was that Amazon’s Elastic Compute Cloud (EC2) had close to half a million servers already running on a Red Hat Linux variant.

Huang Liu, a Research Manager with Accenture Technology Lab with a Ph.D. in Electrical Engineering who has done extensive work on cloud computing, analyzed EC2’s infrastructure and found that Amazon EC2 is currently made up of 454,400 servers.

|

Scooped by

Nicolas Weil

March 17, 2012 5:53 AM

|

Presentation by David S. Linthicum (Blue Mountain Labs)

|

Scooped by

Nicolas Weil

January 4, 2012 8:05 PM

|

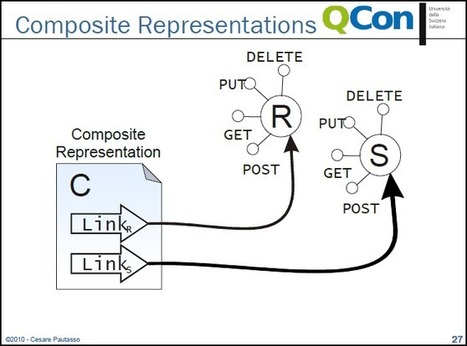

RESTful Business Process Management

Next generation Web services technologies challenge the assumptions made by current standards for process-based service composition. For example, most existing RESTful Web service APIs cannot natively be composed using the WS-BPEL standard.

In this talk we discuss the conceptual relationship between business processes and stateful resources with the goal of enabling lightweight access to service compositions published with a RESTful API. We show that the uniform interface and the hyper-linking capabilities of RESTful services provide an excellent abstraction for publishing as a resource and exposing in a controlled way the execution state of business processes.

READ THE PRESENTATION HERE : http://qconlondon.com/dl/qcon-london-2010/slides/CesarePautasso_RESTfulBusinessProcessManagement.pdf

|

Scooped by

Nicolas Weil

November 30, 2011 4:30 PM

|

We’ve just passed the ten-year anniversary of the first publication of the Agile Manifesto, which laid a philosophical and best-practices foundation for tighter and more effective collaboration between business and technology folks. While Agile has been around more than a decade, I’m finding lately there has been more discussion than ever on how it fits into today’s digital economy. There are extensions, if you will, into business intelligence and data integration (Agile BI and Agile DI). It’s vital to efforts to pursue “Lean IT.” The relationship between Agile and service oriented architecture seems like a natural fit — both philosophies focus on making technology more flexible and adaptable to changing business requirements. But where and how, exactly, do Agile and SOA come together?

|

Scooped by

Nicolas Weil

November 27, 2011 1:54 PM

|

When you deal with resource state in the Cloud, therefore, you’re working at the persistence tier, where traditional approaches to scalability like database sharding and traditional approaches to reliability like replication and caching work reasonably well. There are limitations on Cloud persistence, however; don’t expect to achieve two-phase commit levels of reliability, because the Cloud’s inherent partition tolerance and availability prevent it from exhibiting immediate data consistency. Data within a particular Cloud instance, however, are still internally consistent. Application state is a different matter. Treating application state as though it were resource state (writing your application state to your database every time a customer does anything with their shopping cart) limits your scalability, elasticity, and reliability. Don’t go there if you can avoid it. Instead, you want hypermedia to be the engine of application state. In other words, your stateless application instance must give the client everything it needs to know in order to work its way through the purchasing process, and the client maintains the application state for the entire process. You don’t need to spawn a stateful shopping cart instance on the server every time a customer hits your application, since after all, the more users you have, the more clients you have. Why not let the client do the work?
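As a rough illustration of the idea in this excerpt, the sketch below models a stateless handler that receives the client's current state with every request and returns the next state together with the links the client may follow. It is not taken from the original article: the step names, link relations, and URLs are all invented for the example.

```python
# Hypothetical checkout flow: step names, link relations, and URLs are
# invented for illustration and do not come from the article.

def handle(state, action=None, item=None):
    """Stateless handler: derive the next state purely from the client's input.

    Nothing is stored on the server between calls; the client sends back the
    state it received last time, and the returned links tell it what it may
    do next.
    """
    cart = list(state.get("cart", []))
    step = state.get("step", "browsing")

    if action == "add":
        cart.append(item)
    elif action == "checkout" and cart:
        step = "paying"
    elif action == "pay" and step == "paying":
        step = "done"

    # The links offered depend only on the state the client just sent.
    links = {
        "browsing": {"add": "/cart/add"},
        "paying": {"pay": "/payment"},
        "done": {},
    }[step]
    if step == "browsing" and cart:
        links = {**links, "checkout": "/checkout"}

    return {"cart": cart, "step": step, "links": links}


# The client carries the state from one call to the next.
state = handle({})
state = handle(state, "add", "book")
state = handle(state, "checkout")
state = handle(state, "pay")
print(state["step"], state["cart"])
```

Because `handle` keeps nothing between calls, any instance of the application could serve any request, which is the scalability property the excerpt argues for.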

|

Scooped by

Nicolas Weil

November 23, 2011 2:29 AM

|

Recently I've been writing an application which both produces and consumes data via REST. It's been a while since I've done anything like this, and of course the tools and technology have moved on. I wanted to share a couple of useful tools that I've come across along the way. Both of these have come in very handy in developing and debugging my application: REST-assured and the Chrome REST Console.

|

Scooped by

Nicolas Weil

November 19, 2011 3:18 AM

|

Earlier this month a talk by Thomas Erl (bestselling author on SOA and Cloud Computing) on SOA, Cloud Computing and Semantic Web technologies became available on the Arcitura YouTube channel. In under 30 minutes, the talk gives an overview of how these work together, with a focus on highlighting promising areas of synergy.

|

|

Scooped by

Nicolas Weil

May 7, 2012 8:34 AM

|

|

Scooped by

Nicolas Weil

May 4, 2012 11:40 AM

|

The foundation of BPM includes time-tested technologies for managing both workflow and system interaction. Business processes manage the operational flow of business and when optimized achieve cost containment and flexibility as they need to be efficient and able to adapt to changing business conditions. The art of planning and implementing process management requires all the best cross-functional project management skills one can provide and tools that facilitate the task. Yet when trying to improve the management of processes, the business is often constrained by tool limitations that impose additional artificial barriers that impede success. These barriers result from design considerations and limitations of the capabilities, performance, and scalability. This article details these barriers to BPM and what is required to minimize or eliminate them.

|

Scooped by

Nicolas Weil

April 12, 2012 4:30 PM

|

Open ... and Shut: Many of the benefits of cloud computing are lost in translation as enterprises attempt to force the "new wine" of cloud's flexibility into the "old bottles" of traditional data centers. By running a cloud environment within one's data center, the full benefits of infinitely scalable and flexible infrastructure fade, as Amazon has argued and as Cedexis, a French company with a growing roster of big-name customers, aims to prove. Just as many parents seem to think their kids are above average, many IT professionals delude themselves into thinking their infrastructure – cloudy or otherwise – is high-performance. But often this isn't the case. This may be particularly true of the web, even despite content delivery networks (CDN) like Akamai and Limelight that help IT professionals juice their websites to run as quickly and efficiently as possible.

|

Scooped by

Nicolas Weil

April 4, 2012 2:59 PM

|

In today’s world, HTTP APIs can be classified into:

- Web Services
- RPC URI Tunnelling
- HTTP-based Type 1
- HTTP-based Type 2
- REST

Here is a very good explanation by Jan Algermissen. Unfortunately, every API provider calls their service “RESTful” even when it is not. So what really is a RESTful server? Any API server that is hypertext/hypermedia driven is RESTful. That means the developer or client application should be able to discover “other available resources” from the API’s root URL. This is in fact the most important constraint for implementing RESTful APIs. Additionally, a pure RESTful server is one that adheres to the HATEOAS constraint.
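To make the discovery constraint concrete, here is a minimal sketch in which a client knows only the root URL and finds every other resource by following the links in each response. The API is simulated with an in-memory dictionary, and all URLs, link relations, and field names are assumptions made up for this example rather than anything from the original post.

```python
# Hypothetical API: every URL, link relation, and field name below is
# invented for illustration.
API = {
    "/": {"links": {"orders": "/orders", "customers": "/customers"}},
    "/orders": {"items": [], "links": {"self": "/orders", "root": "/"}},
    "/customers": {"items": [], "links": {"self": "/customers", "root": "/"}},
}


def get(url):
    """Stand-in for an HTTP GET; returns the representation stored at url."""
    return API[url]


def discover(root="/"):
    """Follow links breadth-first from the root and return every reachable URL."""
    seen, queue = set(), [root]
    while queue:
        url = queue.pop(0)
        if url in seen:
            continue
        seen.add(url)
        # The client hard-codes no URL except the root; the rest come from links.
        queue.extend(get(url).get("links", {}).values())
    return sorted(seen)


print(discover())  # ['/', '/customers', '/orders']
```

If the server later moved `/orders` to another path, only the link in the root document would change, and a client written this way would keep working.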

|

Scooped by

Nicolas Weil

March 28, 2012 12:12 PM

|

Talk given by Adrian Cockcroft at SVForum, Sunnyvale CA, March 27th 2012...

|

Scooped by

Nicolas Weil

March 19, 2012 1:39 PM

|

To support this combination of huge traffic and unpredictable demand spikes, Netflix has spent the past few years developing a global video distribution system using the Amazon Web Services (AWS) cloud. By outsourcing to Amazon, the company says it has been able to save on the costs of maintaining and updating a datacentre infrastructure and can react better to demand. However, Netflix cloud architect Adrian Cockcroft still has a number of items on his wishlist — chiefly, faster input-output mechanisms — which are lacking in the cloud.

|

Scooped by

Nicolas Weil

March 17, 2012 5:56 AM

|

CloudStack is an open source cloud orchestration platform that essentially allows service providers to set up on-demand, elastic cloud computing services that function like Amazon EC2. If you've heard of Rackspace's OpenStack, you could consider CloudStack a direct competitor. And just for some background, CloudStack is a Citrix initiative that was born out of their acquisition of Cloud.com. The most prominent user of CloudStack is Zynga.

There's a fine review of CloudStack on shapeblue.com, and I'm going to provide the TL;DR version and just go over the main strengths of this platform that set it apart from its only apples-to-apples competitor: OpenStack.

Read more here : http://www.shapeblue.com/2012/02/23/citrix-cloudstack-30-review/

|

Scooped by

Nicolas Weil

March 17, 2012 5:24 AM

|

OASIS (The Organization for the Advancement of Structured Information Standards) recently released details about their new initiative that aims to create open standards for the portability of cloud services and applications. This effort, the Topology and Orchestration Specification for Cloud Applications or 'TOSCA,' will allow for easier deployment of cloud applications that have the proper requirements for security, governance, and compliance without worrying about being locked into a vendor. This will let the operational behavior of services become independent of the cloud provider or the technology used to host the service.

|

Scooped by

Nicolas Weil

January 1, 2012 4:09 PM

|

For businesses, APIs are clearly evolving from a nice-to-have to a must-have. Externalization of back-end functionality so that apps can interact with systems, not just people, has become critical.

As we move into 2012, several API trends are emerging:

- Enterprise APIs becoming mainstream
- API-centric architectures will be different from portal-centric or SOA-centric architectures
- Data-centric APIs increasingly common
- Many enterprises will implement APIs just to get analytics
- APIs optimized for the mobile developer
- OAuth 2.0 as the default security model

|

Scooped by

Nicolas Weil

November 29, 2011 7:25 AM

|

|

Scooped by

Nicolas Weil

November 27, 2011 1:47 PM

|

In today's world, companies are participating in highly collaborative ecosystems providing their specific expertise to create end-to-end services. This will become more important in the future. SOA and Web 2.0 were milestone developments in the IT industry, while Business Process Management (BPM) has been a major step toward standardized business services automation. With cloud computing, standards and technological developments come together to create an environment in which integrated business processes are supported by software services performed within and between enterprises.

|

Scooped by

Nicolas Weil

November 20, 2011 3:17 AM

|

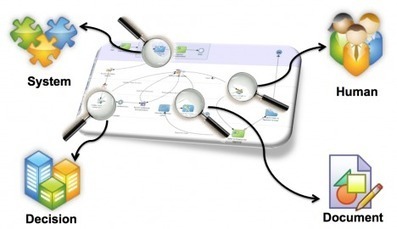

We have quite a rigorous SOA software development process; however, the full value of the collected information is not being realized because the artifacts are stored in disconnected information silos. So far, attempts to introduce tools which could improve the situation (e.g. zAgile Teamwork and Semantic Media Wiki) have been unsuccessful, possibly because the value of a Linked Data approach is not yet fully appreciated. To provide an example Linked Data view of the SOA services and their associated artifacts, I created a prototype consisting of Sesame running on a Tomcat server, with Pubby providing the Linked Data view via the Sesame SPARQL endpoint. TopBraid was connected directly to the Sesame native store (configured via the Sesame Workbench) to create a subset of services sufficient to demonstrate the value of publishing information as Linked Data. In particular, the prototype showed how easy it became to navigate from the requirements for a SOA service through to details of its implementation. The prototype also highlighted that auto-generation of the RDF graph (the data providing the Linked Data view) from the actual source artifacts would be preferable to manual entry, especially if this could be transparently integrated with the current software development process. This has become the focus of the next step: automated knowledge extraction from the source artifacts.

|
