The modern world is complex beyond human understanding and control. The science of complex systems aims to find new ways of thinking about the many interconnected networks of interaction that defy traditional ...

Real world network datasets often contain a wealth of complex topological information. In the face of these data, researchers often employ methods to extract reduced networks containing the most important structures or pathways, sometimes known as 'skeletons' or 'backbones'. Numerous such methods have been developed. Yet data are often noisy or incomplete, with unknown numbers of missing or spurious links. Relatively little effort has gone into understanding how salient network extraction methods perform in the face of noisy or incomplete networks. We study this problem by comparing how the salient features extracted by two popular methods change when networks are perturbed, either by deleting nodes or links, or by randomly rewiring links. Our results indicate that simple, global statistics for skeletons can be accurately inferred even for noisy and incomplete network data, but it is crucial to have complete, reliable data to use the exact topologies of skeletons or backbones. These results also help us understand how skeletons respond to damage to the network itself, as in an attack scenario. Robustness of skeletons and salient features in networks

Louis M. Shekhtman, James P. Bagrow, Dirk Brockmann http://arxiv.org/abs/1309.3797

Via Complexity Digest, Eugene Ch'ng
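
The kind of experiment the abstract describes can be sketched in a few lines. The abstract does not name the two extraction methods, so the sketch below substitutes a maximum-weight spanning tree as a stand-in skeleton; that choice, the random test graph, and every function name are assumptions made for illustration, not the authors' procedure. It deletes a fraction of links at random and compares a global statistic (skeleton size) with the exact topology (Jaccard overlap of skeleton edges).

```python
import random

def max_spanning_tree(n, edges):
    """Kruskal's algorithm: edges of the maximum-weight spanning forest.

    Used here only as a stand-in 'skeleton' (an assumption, see above).
    """
    parent = list(range(n))

    def find(x):
        # Union-find lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    tree = set()
    for u, v, w in sorted(edges, key=lambda e: -e[2]):
        ru, rv = find(u), find(v)
        if ru != rv:  # edge joins two components, so keep it
            parent[ru] = rv
            tree.add((u, v))
    return tree

def jaccard(a, b):
    """Overlap of two edge sets: |a & b| / |a | b|."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def random_weighted_graph(n, p, rng):
    """Hypothetical test network: each pair linked with probability p."""
    return [(u, v, rng.random())
            for u in range(n) for v in range(u + 1, n)
            if rng.random() < p]

def delete_links(edges, fraction, rng):
    """Perturbation: remove a random fraction of the links."""
    keep = len(edges) - int(fraction * len(edges))
    return rng.sample(edges, keep)

rng = random.Random(0)
edges = random_weighted_graph(60, 0.2, rng)
base = max_spanning_tree(60, edges)

for fraction in (0.0, 0.1, 0.3):
    noisy = delete_links(edges, fraction, rng)
    skeleton = max_spanning_tree(60, noisy)
    # Global statistic (size) versus exact topology (edge overlap).
    print(fraction, len(skeleton), round(jaccard(base, skeleton), 3))
```

With this stand-in, the skeleton size barely moves under deletion while the edge overlap can drop, which is the qualitative contrast the abstract reports; the numbers themselves say nothing about the paper's actual methods or data.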

Stanford Medicine, Summer 2013 Special Report

In this paper we argue that if we want to find a more satisfactory approach to tackling the major socio-economic problems we are facing, we need to thoroughly rethink the basic assumptions of macroeconomics and financial theory. Making minor modifications to the standard models to remove “imperfections” is not enough; the whole framework needs to be revisited. Dirk Helbing and Alan Kirman: Rethinking Economics Using Complexity Theory http://www.soms.ethz.ch/paper_economics_complexity_theory

Via Complexity Digest

The availability of large data sets has allowed researchers to uncover complex properties, such as large-scale fluctuations and heterogeneities, in many networks, which have led to the breakdown of standard theoretical frameworks and models. Until recently these systems were considered as haphazard sets of points and connections. Recent advances have generated a vigorous research effort in understanding the effect of complex connectivity patterns on dynamical phenomena. For example, a vast number of everyday systems, from the brain to ecosystems, power grids and the Internet, can be represented as large complex networks. This new and recent account presents a comprehensive explanation of these effects.

Via Complexity Digest

Complex systems are made up of numerous interacting sub-components. Non-linear interactions of these components or agents give rise to emergent behavior observable at the global scale. Agent-based modeling and simulation is a proven paradigm which has previously been used for effective computational modeling of complex systems in various domains. Because of its popular use across different scientific domains, research in agent-based modeling has primarily been vertical in nature.

The goal of this book is to provide a single hands-on guide to developing cognitive agent-based models for the exploration of emergence across various types of complex systems. We present practical ideas and examples for researchers and practitioners for the building of agent-based models using a horizontal approach - applications are demonstrated in a number of exciting domains as diverse as wireless sensor networks, peer-to-peer networks, complex social systems, research networks and HIV epidemiology. Cognitive Agent-based Computing-I: A Unified Framework for Modeling Complex Adaptive Systems using Agent-based & Complex Network-based Methods

Series: SpringerBriefs in Cognitive Computation

Niazi, Muaz A, Hussain, Amir

Via Complexity Digest

The social sciences have sophisticated models of choice and equilibrium but little understanding of the emergence of novelty. Where do new alternatives, new organizational forms, and new types of people come from? Combining biochemical insights about the origin of life with innovative and historically oriented social network analyses, John Padgett and Walter Powell develop a theory about the emergence of organizational, market, and biographical novelty from the coevolution of multiple social networks. They demonstrate that novelty arises from spillovers across intertwined networks in different domains. In the short run actors make relations, but in the long run relations make actors. This theory of novelty emerging from intersecting production and biographical flows is developed through formal deductive modeling and through a wide range of original historical case studies. Padgett and Powell build on the biochemical concept of autocatalysis--the chemical definition of life--and then extend this autocatalytic reasoning to social processes of production and communication. Padgett and Powell, along with other colleagues, analyze a very wide range of cases of emergence. They look at the emergence of organizational novelty in early capitalism and state formation; they examine the transformation of communism; and they analyze with detailed network data contemporary science-based capitalism: the biotechnology industry, regional high-tech clusters, and the open source community. The Emergence of Organizations and Markets

John F. Padgett, Walter W. Powell Princeton University Press (October 14, 2012)

Via Complexity Digest

Definitely one for the reading list: Environmental governance decisions, like other public policies, are often based upon the assumption that having the ‘correct’ legal principles is the key to describing and prescribing the law. Yet, one of the most inescapable and persistent issues in law is its very concept. Western civil law and common law scholars have sought to understand where, how and in what form law emerges. How do normative orders form legal orders, or juridicities? Moreover, law is not only founded on legal principles but also set within a wider social, economic, cultural as well as environmental context. As interdisciplinary approaches to the study of law expand, disciplines such as the sociology of law and legal anthropology have exposed how dogmatic principles can often be incongruent with the legal reality or law in practice. Instead alternative normative orders seem to emerge, each being complex and imperfect. By contrast, the economic analysis of law generally has sought to view normative behavior through the narrower lens of market exchange in which an ‘invisible hand’ simply guides ‘rational’ agents to the optimal solution. In recent years much has been learned from nonlinear dynamics and complex adaptive systems about how complex and imperfect [normative] behaviors can emerge from simple rules (Miller and Page 2007). The approach taken here unites these seemingly contradictory perspectives in order to portray the emergence of self-organizing governance alternatives. The article proposes a means to structure a multidisciplinary analysis of Corsican governance to better understand the underlying decision-making process and frame the corresponding forces that form legal orders. Imperfect Alternatives and the Invisible Elephant: The Complex Nature of Environmental Governance Jovita De Loatch http://papers.ssrn.com/sol3/papers.cfm?abstract_id=2153128

Via Complexity Digest, Eugene Ch'ng

Thanks to AntonJ and CXBooks for the heads up on this. For me, few books, papers and ideas I have ever read have been as rewarding over the long term as those from John H. Holland. It could be simply that I fell for the ideas underpinning genetic algorithms (GA) as a young student, or that he generously took time to explain some finer points on applying GAs to certain classes of problems to me over the phone when I phoned his office for a copy of a paper as a young researcher. More than likely, however, it is the fact that Holland and his cohorts have invented a big chunk of computer science tools and frameworks for thinking about complex systems. Either way, a John Holland book is something I look forward to. His new one, Signals and Boundaries, is now available. In Signals and Boundaries, Holland argues that understanding the origin of the intricate signal/border hierarchies of complex adaptive systems (cas) such as ecosystems, governments, biological cells, and markets is the key to answering such questions. He develops an overarching framework for comparing and steering cas through the mechanisms that generate their signal/boundary hierarchies. Holland lays out a path for developing the framework that emphasizes agents, niches, theory, and mathematical models. He discusses, among other topics, theory construction; signal-processing agents; networks as representations of signal/boundary interaction; adaptation; recombination and reproduction; the use of tagged urn models (adapted from elementary probability theory) to represent boundary hierarchies; finitely generated systems as a way to tie the models examined into a single framework; the framework itself, illustrated by a simple finitely generated version of the development of a multi-celled organism; and Markov processes. Totally worth reading, chewing on and thinking about for a long time.

Social systems are among the most complex known. This poses particular problems for those who wish to understand them. The complexity often makes analytic approaches infeasible and natural language approaches inadequate for relating intricate cause and effect. However, individual- and agent-based computational approaches hold out the possibility of new and deeper understanding of such systems. Simulating Social Complexity examines all aspects of using agent- or individual-based simulation. This approach represents systems as individual elements, each having its own set of differing states and internal processes. The interactions between elements in the simulation represent interactions in the target systems. What makes these elements "social" is that they are usefully interpretable as interacting elements of an observed society. In this, the focus is on human society, but can be extended to include social animals or artificial agents where such work enhances our understanding of human society. This handbook is intended to help in the process of maturation of this new field. It brings together, through the collaborative effort of many leading researchers, summaries of the best thinking and practice in this area and constitutes a reference point for standards against which future methodological advances are judged.

Via Complexity Digest

ECAL 2013, the twelfth European Conference on Artificial Life, presents the current state of the art of a mature and autonomous discipline situated at the intersection of a theoretical perspective (the scientific explanations of different levels of life organizations, e.g., molecules, compartments, cells, tissues, organs, organisms, societies, collective and social phenomena) and advanced technological applications (bio-inspired algorithms and techniques for building concrete solutions such as in robotics, data analysis, search engines, gaming). Advances in Artificial Life, ECAL 2013 Proceedings of the Twelfth European Conference on the Synthesis and Simulation of Living Systems Edited by Pietro Liò, Orazio Miglino, Giuseppe Nicosia, Stefano Nolfi and Mario Pavone http://mitpress.mit.edu/books/advances-artificial-life-ecal-2013

Via Complexity Digest

Evolutionary information theory is a constructive approach that studies information in the context of evolutionary processes, which are ubiquitous in nature and society. In this paper, we develop foundations of evolutionary information theory, building several measures of evolutionary information and obtaining their properties. These measures are based on mathematical models of evolutionary computations, machines and automata. Evolutionary Information Theory

Mark Burgin Information 2013, 4(2), 124-168; http://dx.doi.org/10.3390/info4020124

Via Complexity Digest

Emerging Microbes and Infections (EMI) is a new open access, fully peer-reviewed journal that will publish the best and most interesting research in emerging microbes and infectious disease.

The domain of nonlinear dynamical systems and its mathematical underpinnings has been developing exponentially for a century, the last 35 years seeing an outpouring of new ideas and applications and a concomitant confluence with ideas of complex systems and their applications from irreversible thermodynamics. A few examples are in meteorology, ecological dynamics, and social and economic dynamics. These new ideas have profound implications for our understanding and practice in domains involving complexity, predictability and determinism, equilibrium, control, planning, individuality, responsibility and so on. Our intention is to draw together in this volume, we believe for the first time, a comprehensive picture of the manifold philosophically interesting impacts of recent developments in understanding nonlinear systems and the unique aspects of their complexity. The book will focus specifically on the philosophical concepts, principles, judgments and problems distinctly raised by work in the domain of complex nonlinear dynamical systems, especially in recent years. Philosophy of Complex Systems Edited by Cliff Hooker

Via Complexity Digest

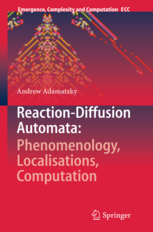

Reaction-diffusion and excitable media are amongst the most intriguing substrates. Despite the apparent simplicity of the physical processes involved, the media exhibit a wide range of amazing patterns: from target and spiral waves to travelling localisations and stationary breathing patterns. These media are at the heart of most natural processes, including morphogenesis of living beings, geological formations, nervous and muscular activity, and socio-economic developments. This book explores a minimalist paradigm of studying reaction-diffusion and excitable media using locally-connected networks of finite-state machines: cellular automata and automata on proximity graphs. Cellular automata are marvellous objects per se because they show us how to generate and manage complexity using very simple rules of dynamical transitions. When combined with the reaction-diffusion paradigm the cellular automata become an essential user-friendly tool for modelling natural systems and designing future and emergent computing architectures. The book brings together hot topics of non-linear sciences, complexity, and future and emergent computing. It shows how to discover propagating localisations and perform computation with them in very simple two-dimensional automaton models. Paradigms, models and implementations presented in the book strengthen the theoretical foundations in the area for future and emergent computing and lay key stones towards physically embodied information processing systems. Reaction-Diffusion Automata: Phenomenology, Localisations, Computation

Andrew Adamatzky http://www.springer.com/physics/complexity/book/978-3-642-31077-5

Via Complexity Digest
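
For readers who have not met an excitable-medium automaton, here is a minimal sketch of the idea. It uses the classic three-state Greenberg-Hastings rule on a small square grid with non-wrapping edges; the rule, grid size and all names are assumptions chosen for illustration and are not taken from the book. A single excited cell produces an expanding ring, the discrete analogue of a target wave.

```python
RESTING, EXCITED, REFRACTORY = 0, 1, 2

def step(grid):
    """Apply one synchronous update of a three-state excitable automaton.

    excited -> refractory, refractory -> resting, and a resting cell
    becomes excited if any of its four nearest neighbours is excited.
    """
    rows, cols = len(grid), len(grid[0])
    new = [[RESTING] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            state = grid[r][c]
            if state == EXCITED:
                new[r][c] = REFRACTORY
            elif state == REFRACTORY:
                new[r][c] = RESTING
            else:
                neighbours = ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                # Cells outside the grid are ignored (no wrap-around).
                if any(0 <= i < rows and 0 <= j < cols
                       and grid[i][j] == EXCITED
                       for i, j in neighbours):
                    new[r][c] = EXCITED
    return new

def show(grid):
    """Render the grid: '.' resting, '#' excited, 'o' refractory."""
    return "\n".join("".join(".#o"[s] for s in row) for row in grid)

# Start from a single excited cell in the middle of a 9x9 grid.
grid = [[RESTING] * 9 for _ in range(9)]
grid[4][4] = EXCITED

for t in range(4):
    print(f"t={t}")
    print(show(grid))
    print()
    grid = step(grid)
```

The refractory state is what keeps the wave moving outward: cells just behind the front cannot be re-excited immediately, so the excitation never turns back on itself.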

The nature of distributed computation in complex systems has often been described in terms of memory, communication and processing. This thesis presents a complete information-theoretic framework to quantify these operations on information (i.e. information storage, transfer and modification), and in particular their dynamics in space and time. The framework is applied to cellular automata, and delivers important insights into the fundamental nature of distributed computation and the dynamics of complex systems (e.g. that gliders are dominant information transfer agents). Applications to several important network models, including random Boolean networks, suggest that the capability for information storage and coherent transfer are maximised near the critical regime in certain order-chaos phase transitions. Further applications to study and design information structure in the contexts of computational neuroscience and guided self-organisation underline the practical utility of the techniques presented here. "The Local Information Dynamics of Distributed Computation in Complex Systems" Joseph T. Lizier (With foreword by Dr. Mikhail Prokopenko) Springer Theses, Springer: Berlin/Heidelberg, 2013. http://dx.doi.org/10.1007/978-3-642-32952-4

Via Complexity Digest

Jason Goldberg is the founder & Chief Executive Officer at Fab.com. He and his cofounder have built Fab.com from a near-failed start up, which they pivoted into a major online design goods outlet business with a run-rate of more than $150 million in sales in 15 months. Two years ago Jason posted a note on the 57 things he had learned in building start ups and pivoting Fab.com. He updated that last week to the 90 things he has learned since. If you are involved in a start up, it's a tad longer than HP's 10 golden rules, but it has some good advice throughout the list. Worth a read if you are involved in start ups or managing a business.

One for the reading list for certain.