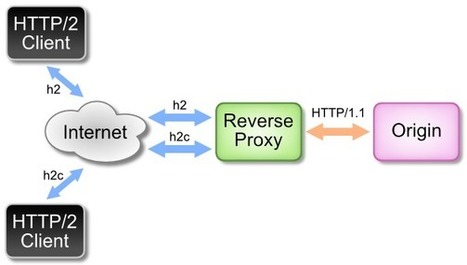

We are pleased to announce the launch of our Adaptive Media Streaming over HTTP/2 trial. We are inviting you to participate in order to help us gather important data.

The experimental HTTP version 2 protocol promises to improve the web browsing experience, but what does it mean for HTTP-based media streaming technologies such as MPEG-DASH?
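Adaptive streaming formats such as MPEG-DASH offer the same content at several bitrates and leave the choice of which one to fetch to the client. DASH does not mandate a selection algorithm. As an illustration only, here is a minimal throughput-based heuristic, assuming a hypothetical `Representation` type and a safety factor of 0.8 to leave headroom for throughput fluctuations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Representation:
    """One encoded rendition, loosely modelled on a DASH Representation (hypothetical fields)."""
    rep_id: str
    bandwidth_bps: int  # declared bitrate of this rendition


def pick_representation(reps, measured_throughput_bps, safety_factor=0.8):
    """Return the highest-bitrate rendition that fits within a fraction of measured throughput.

    Falls back to the lowest-bitrate rendition if none fits the budget.
    """
    if not reps:
        raise ValueError("no representations available")
    budget = measured_throughput_bps * safety_factor
    ordered = sorted(reps, key=lambda r: r.bandwidth_bps)
    chosen = ordered[0]  # lowest bitrate is the fallback
    for rep in ordered:
        if rep.bandwidth_bps <= budget:
            chosen = rep
    return chosen
```

With a ladder of 0.5, 1.5 and 3 Mbit/s and a measured 2.5 Mbit/s, the budget is 2 Mbit/s, so the 1.5 Mbit/s rendition is chosen. Real players often also take the buffer level into account.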

Could MPEG-DASH be the one online video format to replace all others? In a Streaming Forum 2014 panel on the much-hyped format, heavyweights including Cisco, Akamai, the BBC, and Qualcomm shared the hope that the industry could standardize behind DASH.

“To me, it’s the young Turk,” said Kevin Murray, system architect for Cisco, comparing DASH to HLS. Broadcasters are slowly converging on both options, he noted. DASH, however, lacks maturity. The format still needs ubiquity (including the ability to play on iOS devices) and integration (DASH-IF needs to act as a gatekeeper). Keep it simple, Murray advised: a unified DASH is easier to deploy and test, and offers a better user experience.

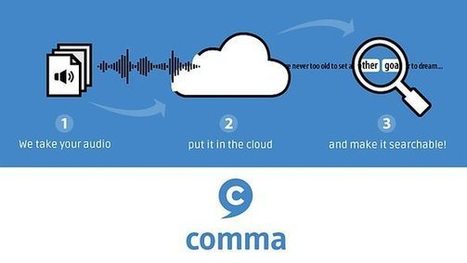

One of the biggest challenges for the BBC Archive is how to open up our enormous collection of radio programmes. As we’ve been broadcasting since 1922, we’ve got an archive of almost 100 years of audio recordings, representing a unique cultural and historical resource.

But the big problem is how to make it searchable. Many of the programmes have little or no meta-data, and the whole collection is far too large to process through human efforts alone.

Help is at hand. Over the last five years or so, technologies such as automated speech recognition, speaker identification and automated tagging have reached a level of accuracy where we can start to get impressive results for the right type of audio. By automatically analysing sound files and making informed decisions about the content and speakers, these tools can effectively help to fill the gaps in our archive’s meta-data.

BBC R&D decided to develop these automatic meta-data extraction technologies in a way that would allow large-scale audio processing. Building them into a cloud-based platform (more on this later) allows us to work through very large archives quickly, cheaply and many times over.

At Streaming Media West, BBC senior technical architect Stephen Godwin gave an in-depth look at the broadcaster's new Video Factory workflow.

With the popularity of iPlayer, the BBC found itself facing the challenge of creating a new and better ingest and transcoding workflow system. The new system is called Video Factory. “Video Factory is designed to be scalable from the ground up, and to use the cloud,” said Godwin. “We wanted something that would scale. The system we were replacing was 4-5 years old, and a hardware solution, and we didn’t plan for it to support smartphones. On the old system, we started running into limits, including storage limits.” “When we designed the new system, we wanted a system that would scale in capability and price,” he said. “The old system has some single points of failure. We wanted to move to a model where we had the resiliency of the broadcast chain.”

Building for Connected TV is complicated

The TV Application Layer originated from our ambition to run BBC iPlayer, News and Sport applications for Connected TVs on as many different devices as possible. There are hundreds of different devices in the marketplace, and they all use slightly different technology to achieve the same result. Having figured out how to build an application on a specific device, we want to use this knowledge to build additional applications for that device. Our answer to this challenge is the TV Application Layer (TAL). By abstracting the differences between devices and creating a number of TV-specific graphical building blocks (like carousels, data grids and lists), we provide a platform upon which we can build our applications.
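The abstraction idea described above (one device-neutral interface, several device-specific implementations, plus reusable widgets built on top) can be sketched as follows. This is not TAL's actual API: every class name, device family and method below is hypothetical and only illustrates the pattern.

```python
from abc import ABC, abstractmethod


class MediaPlayer(ABC):
    """Device-neutral playback interface that application code targets."""

    @abstractmethod
    def play(self, url: str) -> str:
        """Start playback and return a description of what the device did."""


class Html5VideoPlayer(MediaPlayer):
    # Hypothetical: devices whose browser supports the HTML5 <video> element.
    def play(self, url: str) -> str:
        return f"html5-video:{url}"


class VendorPluginPlayer(MediaPlayer):
    # Hypothetical: devices that only expose playback through a vendor plugin object.
    def play(self, url: str) -> str:
        return f"vendor-plugin:{url}"


# Hypothetical device-family table; a real layer would fill this from device detection.
PLAYERS = {
    "modern-browser-tv": Html5VideoPlayer,
    "legacy-set-top-box": VendorPluginPlayer,
}


def player_for(device_family: str) -> MediaPlayer:
    """Pick the implementation for a device family, failing loudly if it is unknown."""
    try:
        return PLAYERS[device_family]()
    except KeyError:
        raise ValueError(f"unsupported device family: {device_family}") from None


class Carousel:
    """A TV-style building block: left/right navigation that wraps around."""

    def __init__(self, items):
        if not items:
            raise ValueError("carousel needs at least one item")
        self.items = list(items)
        self.index = 0

    def right(self):
        self.index = (self.index + 1) % len(self.items)
        return self.items[self.index]

    def left(self):
        self.index = (self.index - 1) % len(self.items)
        return self.items[self.index]
```

Application code calls `player_for(...)` and `Carousel` without knowing which device it runs on, so supporting a new device means adding one implementation rather than touching every app.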

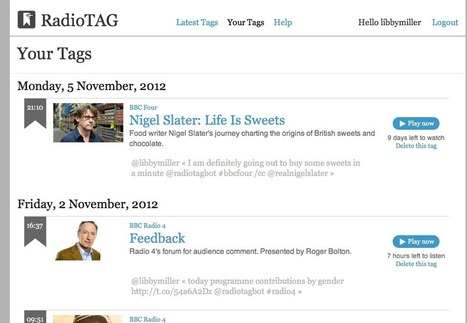

Radiotagbot is a way to bookmark the current point in a programme that you're listening to (or watching) live, using Twitter. To create a bookmark, you send a message to @radiotagbot with the name of a BBC radio or TV station in it (e.g. "radio 4" or "r1x" or "BBC1"), and it will tweet back to you with the title of the programme and a link to the point in time on iPlayer at which it will (usually) appear. If it's on Radio 1, 1x, 2, 3 or 6 Music, it will also attempt to reply with the music track playing.
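The two core steps described above (recognising a station name in a tweet, then working out how far into the current programme the tag was made) can be sketched like this. The alias table, station ids and the `#t=` suffix are hypothetical illustrations; the source does not document the bot's real station list or the iPlayer link format.

```python
import re
from datetime import datetime, timedelta

# Hypothetical alias table mapping names people tweet to internal station ids.
STATION_ALIASES = {
    "radio 4": "bbc_radio_four",
    "r1x": "bbc_1xtra",
    "bbc1": "bbc_one",
}


def find_station(message: str):
    """Return the station id for the first alias mentioned in a tweet, or None."""
    text = message.lower()
    for alias, station_id in STATION_ALIASES.items():
        # Word boundaries stop "bbc1" from matching inside a longer token.
        if re.search(r"\b" + re.escape(alias) + r"\b", text):
            return station_id
    return None


def bookmark_offset(programme_start: datetime, tagged_at: datetime) -> timedelta:
    """Offset into the programme at which the bookmark was made."""
    if tagged_at < programme_start:
        raise ValueError("tag time precedes programme start")
    return tagged_at - programme_start


def format_offset(offset: timedelta) -> str:
    """Render an offset as an illustrative '#t=<seconds>' link suffix."""
    return f"#t={int(offset.total_seconds())}"
```

For example, a tag sent at 09:12:30 during a programme that started at 09:00 gives an offset of 750 seconds, i.e. the suffix `#t=750`.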

This paper describes the capture and web delivery of free-viewpoint video (FVV). FVV allows the viewer to freely change the viewpoint, which is particularly attractive for viewing and analysing sports incidents. Building on previous work on the capture and replay of sports events for TV programme making, we present an FVV player based on the WebGL API, which is part of HTML5. The player implements a streaming mode over IP and image-based rendering using view-dependent texture mapping. This paper was presented at the NEM Summit, Istanbul, 16-18th October 2012, and was given the Best Paper award. The slides shown during the presentation are included as an appendix.
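View-dependent texture mapping blends the textures captured by the real cameras, favouring the cameras whose viewing direction is closest to the virtual viewpoint. The paper's exact weighting scheme is not given here, so this sketch assumes a common choice: weights proportional to a power of the cosine between each camera direction and the virtual view direction, with `sharpness` as a hypothetical tuning parameter. It is written in plain Python rather than as a WebGL shader.

```python
import math


def _normalise(v):
    """Scale a direction vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0:
        raise ValueError("zero-length direction")
    return tuple(c / length for c in v)


def view_dependent_weights(camera_dirs, view_dir, sharpness=8.0):
    """Blend weights for each real camera given the virtual viewing direction.

    Cameras pointing nearly the same way as the virtual view get most of the
    weight; cameras at 90 degrees or more (non-positive cosine) get none.
    The weights sum to 1.
    """
    v = _normalise(view_dir)
    raw = []
    for d in camera_dirs:
        c = _normalise(d)
        cos_angle = sum(a * b for a, b in zip(c, v))
        raw.append(max(cos_angle, 0.0) ** sharpness)
    total = sum(raw)
    if total == 0:
        raise ValueError("no camera faces the requested viewpoint")
    return [w / total for w in raw]
```

A larger `sharpness` makes the renderer switch more abruptly to the nearest camera; a smaller one blends more cameras, which is smoother but blurrier.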

Today the BBC iPlayer, News and Sport apps are available on an astonishing 650 connected TV devices, from internet-enabled or Smart TVs and set-top-boxes to media players and games consoles, delivering more than 45 million videos to 2 million users every month. Most recently, the BBC Sport app has been used by more than 200,000 users a day to watch the phenomenal London 2012 Olympic Games coverage on connected TVs alone, having only launched a few short weeks before. While this is a remarkable achievement in itself, it certainly wasn't easy or straightforward, and I would like to share with you what challenges we have encountered, what we have learnt in the process of solving them and what we believe is important to consider for anyone looking at building applications for connected TVs.

The London 2012 Olympics is remarkable for its television coverage in many ways, not least the use of an ultra-high-definition system called Super Hi-Vision, developed by the Japanese national broadcaster NHK and demonstrated in conjunction with the BBC. Promoted as the future of television, it has sixteen times the resolution of a high-definition image. Seen by informitv on an 8-metre wide screen at BBC Broadcasting House in London, the picture quality is phenomenal. At 7680 x 4320 pixels, the 8K UHDTV2 image has a resolution of 33 megapixels. The projected result is rather like looking through a window direct to the venue, supported by an immersive 22.2 channel surround sound system. The coverage of the opening ceremony put the audience in the best seats in the stadium and allowed them to survey the scene, taking in every detail. Whereas television traditionally cuts from shot to shot in order to provide continuous visual novelty, the wide static shots enabled the viewer to explore the image as if they were actually present. This was partly because of the limited number of camera positions, but also suited the aesthetic of the big screen presentation.
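The resolution figures above can be checked directly, assuming "high-definition" means a 1920 x 1080 frame: 8K has four times the width and four times the height, hence sixteen times the pixels.

```python
UHD2_W, UHD2_H = 7680, 4320  # Super Hi-Vision / 8K UHDTV2 frame
HD_W, HD_H = 1920, 1080      # full HD frame (assumed reference for "high definition")

uhd2_pixels = UHD2_W * UHD2_H
hd_pixels = HD_W * HD_H

print(uhd2_pixels)               # 33177600, i.e. about 33 megapixels
print(uhd2_pixels // hd_pixels)  # 16: four times the width and four times the height
```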

The FascinatE project is developing a system to allow end-users to interactively view and navigate around an ultra-high resolution video panorama showing a live event, with the accompanying audio automatically changing to match the selected view. The output will be adapted to their particular kind of device, covering anything from a mobile handset to an immersive panoramic display with spatial audio. At the production side, this requires the development of new audio and video capture systems, and scripting systems to control the shot framing options presented to the viewer. Intelligent networks with processing components are being developed to repurpose the content to suit different device types and framing selections, and prototypes of user terminals supporting innovative interaction methods are being built to allow viewers to control and display the content.
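One small piece of the idea above, letting each viewer choose a region of a much larger panorama and adapting the output to their device, is computing the crop rectangle for a requested view. This is a hypothetical sketch, not the project's implementation: the parameter names are illustrative, and the real system also involves production scripting and in-network processing that this ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Crop:
    x: int
    y: int
    width: int
    height: int


def viewport_crop(pano_w, pano_h, centre_x, centre_y, crop_w, device_aspect):
    """Crop rectangle of the panorama for a requested view.

    crop_w is the requested width in panorama pixels (smaller means more zoomed in);
    the height follows the device's aspect ratio as closely as the panorama allows.
    The rectangle is shifted, not shrunk, to keep it inside the panorama.
    """
    crop_w = min(crop_w, pano_w)
    crop_h = min(round(crop_w / device_aspect), pano_h)
    x = round(centre_x - crop_w / 2)
    y = round(centre_y - crop_h / 2)
    # Clamp the top-left corner so the whole rectangle lies within the panorama.
    x = max(0, min(x, pano_w - crop_w))
    y = max(0, min(y, pano_h - crop_h))
    return Crop(x, y, crop_w, crop_h)
```

A phone and a large display could request the same centre point with different `crop_w` values and aspect ratios, each receiving a different framing of the same panorama.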

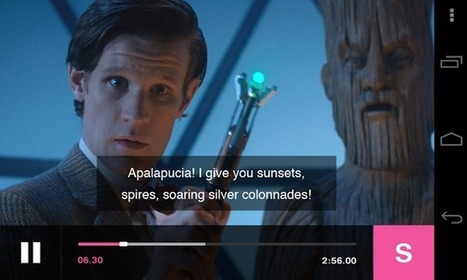

The BBC is going to use companion screen apps to enhance the enjoyment of programmes but also introduce audiences to what can often be a wealth of programme-related information and interactivity online. The broadcaster will launch its first companion screen app this September in the form of a play-along game for Antiques Roadshow, a gentle Sunday night affair where the public bring family treasures for experts to analyse and value. The BBC is harnessing the fact that most viewers already try to second-guess the experts with their own valuations. You will be able to play the game whether you are watching live or on-demand. Victoria Jaye, Head of IPTV & TV Online Content at the BBC, used the Connected TV Summit last week to make the announcement and to outline the general companion app strategy for the broadcaster. She views show-related companion activities on smartphones, tablets and even the PC as a way to explore new creative opportunities. She made it clear that ownership of the app, in terms of the content and viewer experience, will belong to the production teams and that this is considered crucial. The production department will drive the format, while the technology development team will realise their vision.

Video scene segmentation is often regarded as a primary step in the analysis of video data. The process of scene segmentation involves partitioning a video stream into scenes, each composed of frames with similar content. This work may form an early stage of a larger system for automated quality control and image restoration that could be carried out at the BBC.
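A simple way to partition frames into runs of similar content is to compare the grey-level histograms of consecutive frames and start a new segment when they differ sharply. Strictly, this detects abrupt shot boundaries, a common first stage before grouping shots into scenes; the BBC work's actual method is not described in the excerpt. The threshold and bin count are hypothetical, and frames are plain nested lists of 8-bit grey values (0 to 255) to keep the sketch dependency-free.

```python
def grey_histogram(frame, bins=16):
    """Normalised histogram of 8-bit grey levels for a frame given as a list of rows."""
    counts = [0] * bins
    total = 0
    for row in frame:
        for pixel in row:
            counts[pixel * bins // 256] += 1  # map 0..255 onto 0..bins-1
            total += 1
    if total == 0:
        raise ValueError("empty frame")
    return [c / total for c in counts]


def histogram_distance(h1, h2):
    """Half the L1 distance: 0 for identical histograms, 1 for disjoint ones."""
    return 0.5 * sum(abs(a - b) for a, b in zip(h1, h2))


def segment_scenes(frames, threshold=0.5, bins=16):
    """Split frame indices into runs of similar content.

    A new segment starts whenever consecutive frames' histograms differ by
    more than `threshold`.
    """
    if not frames:
        return []
    segments = [[0]]
    prev = grey_histogram(frames[0], bins)
    for i in range(1, len(frames)):
        cur = grey_histogram(frames[i], bins)
        if histogram_distance(prev, cur) > threshold:
            segments.append([])  # content changed sharply: open a new segment
        segments[-1].append(i)
        prev = cur
    return segments
```

Histogram comparison ignores where pixels are in the frame, which makes it robust to camera motion but blind to cuts between shots with similar tonal distribution; gradual transitions such as dissolves also need extra handling.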

New BBC White Paper details research into holographic displays, laser based glasses free 3D screens for multiple viewers and 3D video for mobile.

The BBC R&D team have been working closely with R&D teams across the world on all aspects of 3D TV, from capture, postproduction and coding to transmission and end-user terminals. In the BBC’s 3D-TV R&D Activities in Europe white paper, released last night by Oliver Grau, Thierry Borel, Peter Kauff, Aljoscha Smolic and Ralf Tanger, details of previous and ongoing research into 3D for the home and mobile devices are summarised, providing a fascinating insight into how 3D entertainment could be consumed in the future.

What would a live studio need if it worked directly on IP networks? That was the task BBC R&D set itself with a project that began in 2012 and which will hit a peak when it plays a central role in the world’s first live end-to-end IP production in Ultra HD to be conducted at the Commonwealth Games.

IP has been used by many broadcasters, BBC included, to link a studio to remote locations, but the missing piece has been an IP production experience using internet protocols to switch and mix the videos. The BBC R&D trials conducted during the CWG next month promise to do just that, while also testing the limits of network performance by shunting 4K data around the UK in a collaborative production workflow – live.

“The concept is to introduce software and IP into the overall chain so it can be used alongside existing technology like DTT,” said Matthew Postgate, controller, BBC R&D. “IP will enable us to be more flexible with services we already produce, and longer-term, to introduce new kinds of services.”

BBC R&D describes IP Studio as an open source software framework for handling video, audio and data content, composed of off-the-shelf IT components and adhering to standards such as IEEE 1588, the Precision Time Protocol used for packet-based clock synchronisation.
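IEEE 1588, the Precision Time Protocol (PTP), lets devices on a packet network agree on a common clock. At its core is a four-timestamp exchange between a master and a slave clock. The sketch shows only the standard offset and delay calculation, assuming a symmetric network path (any asymmetry appears directly as offset error); it omits message formats, best-master-clock selection and filtering, and is not taken from IP Studio.

```python
def ptp_offset_and_delay(t1, t2, t3, t4):
    """Clock offset and one-way path delay from one PTP Sync / Delay_Req exchange.

    t1: master sends Sync (master clock)
    t2: slave receives Sync (slave clock)
    t3: slave sends Delay_Req (slave clock)
    t4: master receives Delay_Req (master clock)
    Assumes the path delay is the same in both directions.
    Returns (offset of the slave clock from the master, mean path delay).
    """
    master_to_slave = t2 - t1  # = delay + offset
    slave_to_master = t4 - t3  # = delay - offset
    offset = (master_to_slave - slave_to_master) / 2
    delay = (master_to_slave + slave_to_master) / 2
    return offset, delay
```

For example, with a true path delay of 500 ns and a slave clock running 1200 ns ahead, timestamps t1 = 1000, t2 = 2700, t3 = 5000, t4 = 4300 give an offset of 1200 and a delay of 500; the slave then subtracts the offset to align with the master.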

Building on a research trial during the World Cup, the BBC will use the Commonwealth Games in Glasgow to trial what it calls the world's first live Ultra HD production and transmission entirely in IP.

The BBC prides itself on pioneering media technology, and its renowned research and development team has come up trumps again with what it claims is a world-first live UHD production, produced and transmitted entirely in the IP domain.

The trial is planned to take place during the Commonwealth Games, a quadrennial international multi-sport event hosted in Glasgow for two weeks from July 23.

It extends the BBC's trial of UHD live feeds from the World Cup in Brazil delivered simultaneously over conventional digital terrestrial and IP networks. The first of three matches delivered in the format for these tests airs on June 28.

From Glasgow, Scotland, the BBC intends to replicate the Brazil trial by sending UHD signals across DTT and IP networks in partnership with telco BT and infrastructure provider Arqiva, with the feed compressed in HEVC. This will again test the quality of service to a home environment.

Adobe, NBCU, Elemental, Deltatre, LiveU, and more are readying streaming platforms that will deliver coverage to desktops and mobile devices around the globe.

Four years ago, according to the IOC, there was a defining moment in Olympic broadcasting history. Vancouver was the first Winter Games to be fully embraced on digital media platforms, with digital coverage accounting for around half of the overall broadcast output.

Globally, on official rights-holding broadcasters’ internet and mobile platforms, there were more than 265 million video views and in excess of 1.2 billion page views during the games. There were also approximately 6,000 hours of 2010 coverage on mobile phone platforms.

Digital coverage from Sochi will surpass this, with many more broadcasters drawing on the clear consumer demand from London 2012 for any time, any device viewing.

The IOC places such draconian restrictions on rights holders, and on anyone working for them (including technology contractors), reporting their involvement in the Olympics that it's tricky to unearth details on this story. With that caveat, here are some of the large-scale video streaming activities set to go live from Sochi at the end of this week.

For the 2012 Summer Games in London, the BBC demanded that its live streams be just as resilient as its broadcast channels. Learn how it delivered on that goal. Live video streams were key to the ambitious online user proposition for the London 2012 Olympics, and that coverage had to match the very high standards of resilience and quality set by traditional broadcast. Hear about the challenges the BBC faced when designing an HTTP streaming infrastructure resilient enough to cope with huge volumes. Learn about the solution the BBC used during the Games and what changes to its methodology were required to build resilience into a cloud-based infrastructure.
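The abstract does not describe the BBC's actual design, but one generic building block of resilient HTTP streaming is redundancy: the same segments are served from several independent origins or CDNs, and the client moves on when one fails. This hypothetical sketch shows client-side failover; the origin URLs are placeholders, and `fetch` is injected (expected to raise `OSError`, as `urllib.error.URLError` does) so the logic can be tested without a network.

```python
from typing import Callable, Sequence


def fetch_with_failover(segment: str, origins: Sequence[str],
                        fetch: Callable[[str], bytes]) -> bytes:
    """Try each redundant origin in turn until one returns the segment.

    Raises RuntimeError listing every failure if all origins fail.
    """
    errors = []
    for base in origins:
        url = f"{base.rstrip('/')}/{segment}"
        try:
            return fetch(url)
        except OSError as exc:  # network errors; try the next origin
            errors.append(f"{url}: {exc}")
    raise RuntimeError("all origins failed: " + "; ".join(errors))
```

Ordering `origins` by preference keeps normal traffic on the primary path, while a failure on one path costs only a retry rather than a stalled stream.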

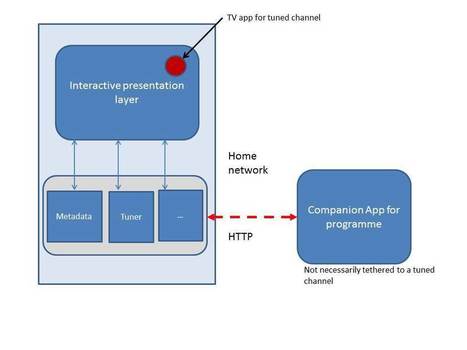

The BBC has called for technology standardisation for second screen services in order to help move the broadcast sector forwards. Speaking at TV Connect yesterday, BBC senior technologist for mobile and dual screen, Jeremy Kramskoy, said that common standards were important in terms of bringing down development and technology licensing costs. He also said that in the future connected home, when synchronisation will increasingly result in different web-powered devices talking to each other, “standardisation has to happen, otherwise it’s going to be a complete nightmare.”

The BBC Olympics sports broadcast service is one of the largest media events of 2012. It has brought the video service industry to a totally new level, earning the 2012 Olympics the reputation of the "truly digital games". In this context, it is important to recognise the expertise gained and leverage it for further growth. In this post, we delve into the project, its implementation, and the valuable experience that you can apply to your own projects.

It's been a long time since the last series of blogs on Orchestrated Media. Time for a catch-up. Firstly, we've stopped using the term orchestrated media, and instead talk about dual-screen and companion screen. Dual-screen reflects where things stand currently: the companion service can synchronise against the broadcast content using various technologies. See Steve's blog about that. The BBC's launch of dual screen for Antiques Roadshow is imminent. Looking ahead, we see the next generation of services allowing a wider set of companion services, where the TV, the companion, and the Web are inter-communicating, allowing a website or a companion app to both monitor and control the TV. This gives TV-awareness to websites, and web-awareness to TV services. Each of these three domains could be the launch-point for companion screen services, and engage the other two domains as needed. Companion screen refers to this wider role for the companion device, compared to today.
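Whatever technology supplies the synchronisation signal, a companion app typically ends up holding a sample that links a point on the programme timeline to its own clock, and extrapolates from there. This is a hypothetical sketch of that step and of firing programme-timed events (such as a valuation question in a play-along game); the names and fields are illustrative and not taken from any BBC or standards API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TimelineSample:
    """One observation linking the programme timeline to the companion's own clock."""
    content_time: float  # seconds into the programme when the sample was taken
    wall_time: float     # companion device clock reading (seconds) at that moment
    speed: float = 1.0   # 1.0 while playing, 0.0 while paused


def estimate_content_time(sample: TimelineSample, now: float) -> float:
    """Extrapolate the current programme position from the latest sample."""
    return sample.content_time + (now - sample.wall_time) * sample.speed


def due_events(events, sample, now, already_fired):
    """Names of events whose programme time has been reached and that have not fired yet.

    events: iterable of (content_time, name) pairs, returned in programme order.
    """
    position = estimate_content_time(sample, now)
    return [name for t, name in sorted(events)
            if t <= position and name not in already_fired]
```

Because the estimate depends only on programme time, the same logic would work whether the viewer is watching live or on-demand, provided a fresh sample arrives after any pause or seek.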

As many of you are aware, we chose Adobe Flash as the media format to stream to Android devices. Doing so provided us with a number of cross-platform efficiencies, as the same infrastructure can be used for delivery on PCs, Android phones, and set-top boxes. Adobe's strategic decision to remove support for the Flash Player plug-in meant that we had to change the way that we play back this content. [...] We looked at a number of different solutions, for example HTTP Live Streaming (HLS), which is used to stream BBC media to other platforms. Unfortunately, HLS isn't supported on Android OS versions prior to Honeycomb. In the end, Flash was still the best choice of media format for us to use, and the only practical technology for us to play this format back on Android is Adobe AIR.

Since 1999, the BBC's Red Button feature has delivered alternative camera angles, sports scores and the like over broadcast spectrum, but it's now set to become internet-enabled. Channel surfers shouldn't expect a full-blown web experience, however, as the Beeb stresses it's not about to include everything and the kitchen sink in terms of functionality. Rather, its Connected Red Button aims for simplicity. Punching the clicker could bring up iPlayer to catch previous episodes of shows, or save recipes from a cooking program for later viewing on a computer or smartphone. Companion screen experiences such as the Antiques Roadshow app, which is slated for a September release, are also part of its web-connected roadmap.

Our editorial approach to companion experiences is threefold:

- Build on existing audience needs and behaviour
- Go beyond broadcast
- Drive creative renewal and innovation

We want to immerse our audience in the programme they're watching even more by building on the existing needs and behaviours the show inspires. We've learned a lot about this from years of programme-related experimentation on BBC Red Button and BBC Online.

Remember when Intel turned your life into a museum exhibition using your Facebook data? Or when Google put your place of birth into Arcade Fire’s The Wilderness Downtown video? How about when Take This Lollipop warned you – specifically you – about the dangers of social networking? That’s ‘Perceptive Media’, and it’s coming to a TV near you – eventually.

At last night’s SMC_MCR event in Manchester, UK, Ian Forrester of the BBC’s Research and Development department discussed early-stage experiments that are being conducted into bringing Perceptive Media to our TV sets.

Here’s how it would work – a TV signal would be sent, as normal, to your set-top box or TV. However, the hardware in your living room would be able to modify that signal with information about you, to create a subtly different version of what you were watching, personalised for you.
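The mechanism described above, a common broadcast that the equipment in the living room adapts using information about the viewer, could in its simplest form look like template filling. This is a purely hypothetical sketch: the script, placeholder names and defaults are invented for illustration, and the BBC experiments themselves are not described in enough detail to reproduce.

```python
from string import Template

# Hypothetical narration line carried in the common signal, with placeholders.
BASE_SCRIPT = Template("It was a $weather evening in $city when the phone rang.")

# Neutral values used when the local profile has nothing to offer.
DEFAULTS = {"weather": "quiet", "city": "the city"}


def personalise(template: Template, profile: dict) -> str:
    """Fill placeholders from a local viewer profile, falling back to defaults.

    Only keys the template is designed for are used; other profile data is ignored.
    """
    values = {**DEFAULTS, **{k: v for k, v in profile.items() if k in DEFAULTS}}
    return template.substitute(values)
```

Every viewer receives the same signal; only the final rendering differs, so a viewer with no profile still gets a coherent, generic version.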
