
|

Suggested by

Fil Menczer

|

Bao Tran Truong, Oliver Melbourne Allen & Filippo Menczer

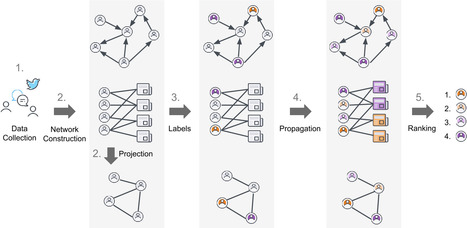

EPJ Data Science volume 13, Article number: 10 (2024)

The spread of misinformation poses a threat to the social media ecosystem. Effective countermeasures to mitigate this threat require that social media platforms be able to accurately detect low-credibility accounts even before the content they share can be classified as misinformation. Here we present methods to infer account credibility from information diffusion patterns, in particular leveraging two networks: the reshare network, capturing an account’s trust in other accounts, and the bipartite account-source network, capturing an account’s trust in media sources. We extend network centrality measures and graph embedding techniques, systematically comparing these algorithms on data from diverse contexts and social media platforms. We demonstrate that both kinds of trust networks provide useful signals for estimating account credibility. Some of the proposed methods yield high accuracy, providing promising solutions to promote the dissemination of reliable information in online communities. Two kinds of homophily emerge from our results: accounts tend to have similar credibility if they reshare each other’s content or share content from similar sources. Our methodology invites further investigation into the relationship between accounts and news sources to better characterize misinformation spreaders.

Read the full article at: epjdatascience.springeropen.com
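The homophily finding above (accounts that reshare each other tend to have similar credibility) can be illustrated with a toy propagation scheme. This is only a sketch under stated assumptions, not the centrality or embedding methods from the article: the function name `estimate_credibility`, the 0 to 1 credibility scale, the neutral starting value of 0.5, and the neighbor-averaging rule are all hypothetical choices made for illustration.

```python
def estimate_credibility(reshares, known, rounds=20):
    """Spread known credibility labels along reshare links (illustrative only).

    reshares: dict mapping an account to the list of accounts it reshared.
    known:    dict mapping labeled accounts to a credibility in [0, 1].
    """
    accounts = set(reshares) | {v for vs in reshares.values() for v in vs}
    # Assumption: unlabeled accounts start at a neutral 0.5.
    scores = {a: known.get(a, 0.5) for a in accounts}
    for _ in range(rounds):
        updated = {}
        for a in accounts:
            if a in known:
                # Labeled accounts keep their label.
                updated[a] = known[a]
                continue
            neighbors = reshares.get(a, [])
            if neighbors:
                # An unlabeled account takes the mean score of accounts it reshared.
                updated[a] = sum(scores[n] for n in neighbors) / len(neighbors)
            else:
                updated[a] = scores[a]
        scores = updated
    return scores


if __name__ == "__main__":
    reshares = {"u1": ["good", "good2"], "u2": ["bad"], "u3": ["u2", "bad"]}
    known = {"good": 1.0, "good2": 0.9, "bad": 0.1}
    print(estimate_credibility(reshares, known))
```

In this toy setup, an account that only reshares low-credibility accounts ends up with a low estimate itself, which mirrors the homophily signal in spirit only.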

|

Suggested by

Fil Menczer

|

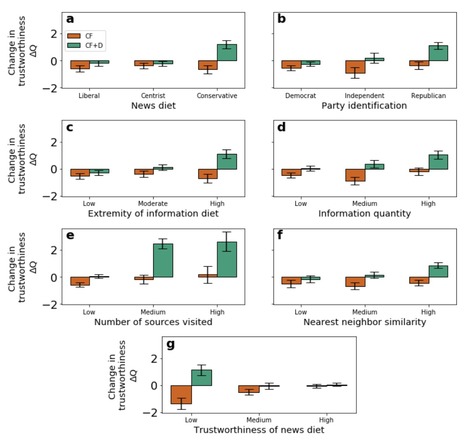

Saumya Bhadani, Shun Yamaya, Alessandro Flammini, Filippo Menczer, Giovanni Luca Ciampaglia & Brendan Nyhan

Nature Human Behaviour (2022)

Newsfeed algorithms frequently amplify misinformation and other low-quality content. How can social media platforms more effectively promote reliable information? Existing approaches are difficult to scale and vulnerable to manipulation. In this paper, we propose using the political diversity of a website’s audience as a quality signal. Using news source reliability ratings from domain experts and web browsing data from a diverse sample of 6,890 US residents, we first show that websites with more extreme and less politically diverse audiences have lower journalistic standards. We then incorporate audience diversity into a standard collaborative filtering framework and show that our improved algorithm increases the trustworthiness of websites suggested to users, especially those who most frequently consume misinformation, while keeping recommendations relevant. These findings suggest that partisan audience diversity is a valuable signal of higher journalistic standards that should be incorporated into algorithmic ranking decisions.

Read the full article at: www.nature.com
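To make the idea of audience diversity as a ranking signal concrete, here is a minimal sketch. It assumes each visitor has a partisanship score on a hypothetical scale from -1 (left) to +1 (right), measures diversity as the variance of those scores, and blends it linearly with a relevance score. The function names, the `weight` parameter, and the blending rule are illustrative assumptions and are not taken from the paper's collaborative filtering framework.

```python
from statistics import pvariance


def audience_diversity(visitor_scores):
    """Variance of visitors' partisanship scores (assumed scale: -1 to +1)."""
    return pvariance(visitor_scores) if len(visitor_scores) > 1 else 0.0


def rerank(relevance, audiences, weight=0.5):
    """Order sites by a blend of relevance and normalized audience diversity.

    relevance: dict site -> relevance score in [0, 1] (e.g. from a recommender).
    audiences: dict site -> list of visitor partisanship scores.
    weight:    hypothetical knob; 0 ignores diversity, 1 ignores relevance.
    """
    diversity = {site: audience_diversity(audiences[site]) for site in relevance}
    max_div = max(diversity.values()) or 1.0  # avoid dividing by zero
    blended = {
        site: (1 - weight) * relevance[site] + weight * diversity[site] / max_div
        for site in relevance
    }
    return sorted(blended, key=blended.get, reverse=True)


if __name__ == "__main__":
    audiences = {
        "mixed.example": [-0.8, -0.2, 0.3, 0.9],
        "partisan.example": [0.8, 0.9, 0.85, 0.95],
    }
    relevance = {"mixed.example": 0.6, "partisan.example": 0.7}
    print(rerank(relevance, audiences, weight=0.0))
    print(rerank(relevance, audiences, weight=0.5))
```

With `weight=0.0` the ranking follows relevance alone; raising the weight promotes the site whose audience spans the political spectrum.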

|

Suggested by

Fil Menczer

|

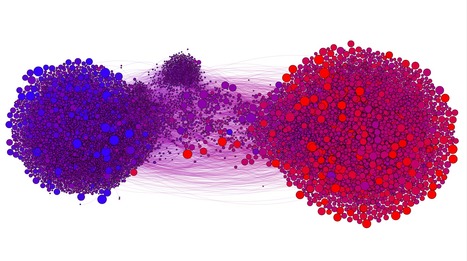

We analyze the relationship between partisanship, echo chambers, and vulnerability to online misinformation by studying news sharing behavior on Twitter. While our results confirm prior findings that online misinformation sharing is strongly correlated with right-leaning partisanship, we also uncover a similar, though weaker, trend among left-leaning users. Because of the correlation between a user’s partisanship and their position within a partisan echo chamber, these types of influence are confounded. To disentangle their effects, we performed a regression analysis and found that vulnerability to misinformation is most strongly influenced by partisanship for both left- and right-leaning users. Read the full article at: misinforeview.hks.harvard.edu

|

Suggested by

mohsen mosleh

|

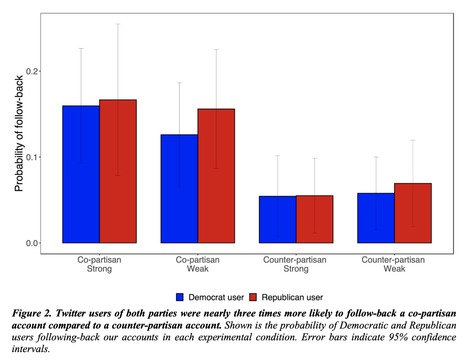

Mohsen Mosleh, Cameron Martel, Dean Eckles, David G. Rand

Americans are much more likely to be socially connected to co-partisans, both in daily life and on social media. But this observation does not necessarily mean that shared partisanship per se drives social tie formation, because partisanship is confounded with many other factors. Here, we test the causal effect of shared partisanship on the formation of social ties in a field experiment on Twitter. We created bot accounts that self-identified as people who favored the Democratic or Republican party, and that varied in the strength of that identification. We then randomly assigned 842 Twitter users to be followed by one of our accounts. Users were roughly three times more likely to follow back bots whose partisanship matched their own, and this was true regardless of the bot’s strength of identification. Interestingly, there was no partisan asymmetry in this preferential follow-back behavior: Democrats and Republicans alike were much more likely to reciprocate follows from co-partisans. These results demonstrate a strong causal effect of shared partisanship on the formation of social ties in an ecologically valid field setting, and have important implications for political psychology, social media, and the politically polarized state of the American public.

|

Suggested by

Fil Menczer

|

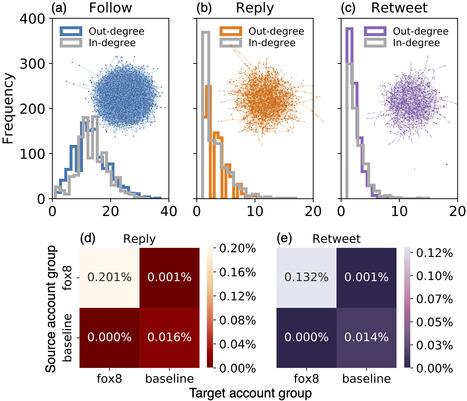

Large language models (LLMs) exhibit impressive capabilities in generating realistic text across diverse subjects. Concerns have been raised that they could be utilized to produce fake content with a deceptive intention, although evidence thus far remains anecdotal. This paper presents a case study about a Twitter botnet that appears to employ ChatGPT to generate human-like content. Through heuristics, we identify 1,140 accounts and validate them via manual annotation. These accounts form a dense cluster of fake personas that exhibit similar behaviors, including posting machine-generated content and stolen images, and engage with each other through replies and retweets. ChatGPT-generated content promotes suspicious websites and spreads harmful comments. While the accounts in the AI botnet can be detected through their coordination patterns, current state-of-the-art LLM content classifiers fail to discriminate between them and human accounts in the wild. These findings highlight the threats posed by AI-enabled social bots.

Read the full article at: arxiv.org

|

Suggested by

Fil Menczer

|

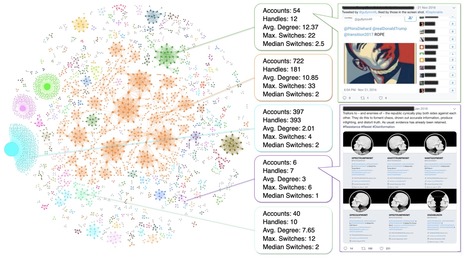

Coordinated campaigns are used to manipulate social media platforms and influence their users, a critical challenge to the free exchange of information. Our paper introduces a general, unsupervised, network-based methodology to uncover groups of accounts that are likely coordinated. The proposed method constructs coordination networks based on arbitrary behavioral traces shared among accounts. We present five case studies of influence campaigns, four of which are in the diverse contexts of U.S. elections, Hong Kong protests, the Syrian civil war, and cryptocurrency manipulation. In each of these cases, we detect networks of coordinated Twitter accounts by examining their identities, images, hashtag sequences, retweets, or temporal patterns. The proposed approach proves to be broadly applicable to uncover different kinds of coordination across information warfare scenarios.

By Diogo Pacheco, Pik-Mai Hui, Chris Torres, Bao Truong, Sandro Flammini & Fil Menczer

Read the full open-access article in the Proceedings of ICWSM 2021
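The general idea of a coordination network built from shared behavioral traces can be sketched as follows. This is a simplified illustration and not the paper's pipeline: it assumes each account is described by a set of traces (for example, hashtags), uses Jaccard similarity with a hypothetical cutoff of 0.5 to link accounts, and reports connected components of two or more accounts as candidate coordinated groups. The function names and the threshold are assumptions.

```python
from itertools import combinations


def jaccard(a, b):
    """Jaccard similarity between two sets of behavioral traces."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def coordination_groups(traces, threshold=0.5):
    """Link accounts whose traces overlap strongly and return connected groups.

    traces:    dict account -> set of traces (hashtags, image hashes, ...).
    threshold: hypothetical similarity cutoff for drawing an edge.
    """
    # Build the coordination network as an adjacency map.
    adjacency = {account: set() for account in traces}
    for u, v in combinations(traces, 2):
        if jaccard(traces[u], traces[v]) >= threshold:
            adjacency[u].add(v)
            adjacency[v].add(u)

    # Collect connected components that contain at least two accounts.
    seen, groups = set(), []
    for start in traces:
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(adjacency[node] - component)
        seen |= component
        if len(component) > 1:
            groups.append(component)
    return groups


if __name__ == "__main__":
    traces = {
        "a": {"#x", "#y", "#z"},
        "b": {"#x", "#y", "#z"},
        "c": {"#x", "#y"},
        "d": {"#q"},
    }
    print(coordination_groups(traces))
```

Swapping the trace type (retweeted IDs, posting-time bins, shared images) changes what kind of coordination the network captures, which is the flexibility the abstract emphasizes.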

|

Suggested by

Fil Menczer

|

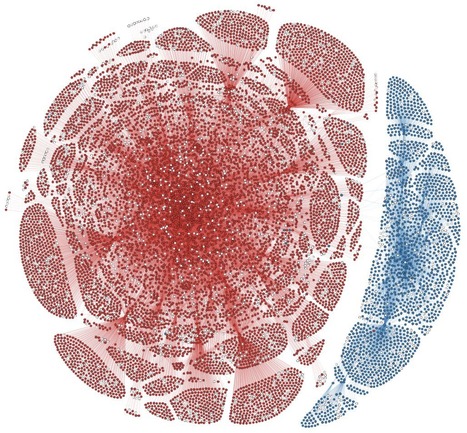

Christopher Torres-Lugo, Kai-Cheng Yang, Filippo Menczer

A growing body of evidence points to critical vulnerabilities of social media, such as the emergence of partisan echo chambers and the viral spread of misinformation. We show that these vulnerabilities are amplified by abusive behaviors associated with so-called “follow trains” on Twitter, in which long lists of like-minded accounts are mentioned for others to follow. This leads to the formation of highly dense and hierarchical echo chambers. We present the first systematic analysis of U.S. political train networks, which involve many thousands of hyper-partisan accounts. These accounts engage in various suspicious behaviors, including some that violate platform policies: we find evidence of inauthentic automated accounts, artificial inflation of friends and followers, and abnormal content deletion. The networks are also responsible for amplifying toxic content from low-credibility and conspiratorial sources. Platforms may be reluctant to curb this kind of abuse for fear of being accused of political bias. As a result, the political echo chambers manufactured by follow trains grow denser and train accounts accumulate influence; even political leaders occasionally engage with them.

|

Suggested by

Fil Menczer

|

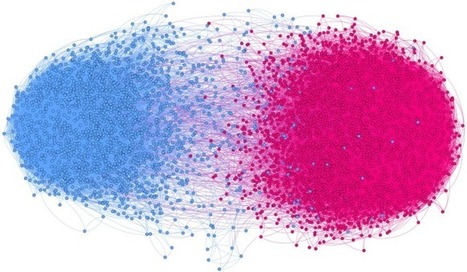

Kazutoshi Sasahara, Wen Chen, Hao Peng, Giovanni Luca Ciampaglia, Alessandro Flammini & Filippo Menczer

Journal of Computational Social Science (2020)

While social media make it easy to connect with and access information from anyone, they also facilitate basic influence and unfriending mechanisms that may lead to segregated and polarized clusters known as “echo chambers.” Here we study the conditions in which such echo chambers emerge by introducing a simple model of information sharing in online social networks with the two ingredients of influence and unfriending. Users can change both their opinions and social connections based on the information to which they are exposed through sharing. The model dynamics show that even with minimal amounts of influence and unfriending, the social network rapidly devolves into segregated, homogeneous communities. These predictions are consistent with empirical data from Twitter. Although our findings suggest that echo chambers are somewhat inevitable given the mechanisms at play in online social media, they also provide insights into possible mitigation strategies.
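The two ingredients named above, influence and unfriending, can be illustrated with a small simulation. This is a simplified stand-in and not the model from the paper: opinions live on an assumed scale from -1 to +1, a user moves toward a friend whose opinion is within a hypothetical `tolerance`, and otherwise may replace that friend with a random other user. All parameter names and default values are assumptions chosen for illustration.

```python
import random


def simulate(n=50, friends=5, steps=5000, tolerance=0.4,
             influence=0.1, unfriend=0.1, seed=0):
    """Toy opinion dynamics with influence and unfriending (illustrative only)."""
    rng = random.Random(seed)
    opinions = [rng.uniform(-1, 1) for _ in range(n)]
    # Each user follows `friends` distinct other users chosen at random.
    follows = [rng.sample([j for j in range(n) if j != i], friends)
               for i in range(n)]
    for _ in range(steps):
        i = rng.randrange(n)
        slot = rng.randrange(friends)
        j = follows[i][slot]
        if abs(opinions[i] - opinions[j]) < tolerance:
            # Influence: move a little toward a friend with a similar opinion.
            opinions[i] += influence * (opinions[j] - opinions[i])
        elif rng.random() < unfriend:
            # Unfriending: swap a dissimilar friend for a random non-friend.
            candidates = [k for k in range(n)
                          if k != i and k not in follows[i]]
            follows[i][slot] = rng.choice(candidates)
    return opinions, follows


def discordant_fraction(opinions, follows, tolerance=0.4):
    """Share of follow ties that connect users with dissimilar opinions."""
    ties = [(i, j) for i, fs in enumerate(follows) for j in fs]
    return sum(abs(opinions[i] - opinions[j]) >= tolerance
               for i, j in ties) / len(ties)


if __name__ == "__main__":
    before = simulate(steps=0)
    after = simulate(steps=5000)
    print("discordant ties before:", discordant_fraction(*before))
    print("discordant ties after: ", discordant_fraction(*after))
```

Comparing the share of discordant ties before and after a run gives a rough sense of how much the toy network has segregated; varying `influence` and `unfriend` shows how sensitive that is to each mechanism.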

|
