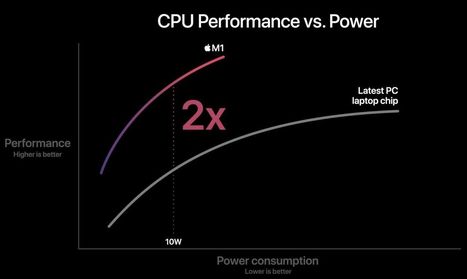

Apple in turn takes the "Verticale du Fou" and drops Intel. Three times more performant per watt dissipated, the in-house #M1 processor, announced this past November 10, seems to confirm how far the #ARM architecture has managed to catch up with and then overtake the #X86 architecture of the Santa Clara giant.

The M1's 16 billion transistors are indeed fabricated on a 5-nanometer process, whereas Intel still cannot master either 10 or 7 nanometers.

An excellent Tom's #Hardware article shows the extent of the threat now hanging over Intel: it is not so much the Mac's market share (barely 9%) as that of its developers (30%), who will shift more and more exclusively to the ARM architecture.

By taking over the France-based embedded software R&D activities of Intel, the world leader in semiconductors, the diamond-badge brand scores a double. The carmaker is clearly accelerating in connected cars, and it is restoring its image in its home country, where it is stepping in to save jobs.

Could Samsung be the first big defection from ARM since the SoftBank takeover?

It was always thought that, when ARM relinquished its independence, its customers would look around for other alternatives.

The nice thing about RISC-V is that it’s independent, open source and royalty-free.

And RISC-V is what Samsung is reported to be using for an IoT CPU in preference to ARM.

Now SoftBank made a point of saying that its takeover of ARM was to get into IoT. If Samsung is now going to RISC-V for its IoT CPU, this affects the scale of SoftBank's aspirations and may persuade others to defect to RISC-V.

The Samsung RISC-V MCU is said to be aimed squarely at the ARM Cortex M0.

Nvidia and Qualcomm are already using RISC-V in the development of GPU memory controllers and IoT processors.

As Intel found, it is almost impossible to replace an incumbent processor architecture in a major product area, which means that ARM's place as the incumbent architecture in cellphones is secure. At the moment, however, there is no incumbent processor architecture in IoT or MCUs, so these markets are up for grabs by any aspiring rival processor architecture.

The iPad Air 2 is powered by the A8X SoC, a chip with three billion transistors and a tri-core CPU that gets uncomfortably close to laptop levels of performance, with a decent GPU to boot. .../... The A8X now has a Geekbench score that is very close to a dual-core Core i5-4250U, the Haswell chip that's inside the mid-2013 13-inch MacBook Air. The A8X CPU manages single- and multi-threaded scores of 1812 and 4477, while the Core i5-4250U is at 2281 and 4519.
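To put the Geekbench figures quoted above side by side, here is a minimal sketch that computes the A8X scores as a fraction of the Core i5-4250U scores. The variable names are illustrative only; the four numbers are the ones given in the excerpt.

```python
# Geekbench scores as quoted in the excerpt above.
a8x = {"single": 1812, "multi": 4477}
core_i5_4250u = {"single": 2281, "multi": 4519}

# Ratio of A8X score to Core i5-4250U score for each test.
ratios = {test: a8x[test] / core_i5_4250u[test] for test in a8x}

for test, ratio in ratios.items():
    print(f"{test}-threaded: A8X reaches {ratio:.0%} of the Core i5-4250U score")
```

On these numbers the gap is much narrower in the multi-threaded test than in the single-threaded one, which is consistent with the A8X having three cores against the Core i5's two.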

The Russian government has decided to domestically produce a computer chip for use in government offices and state-run firms. The move is meant to elbow processors from the likes of AMD and Intel out of government use due to concerns about US spying and processor back doors. Electronics Weekly says Russian President Putin decided to push forward this processor development initiative. It follows a move, four years ago, when the Russian government said that all its computers would be moving to Linux. The Russian processor is currently referred to as the 'Baikal' microprocessor, named after the most voluminous freshwater lake in the world. The chip is being designed by T-Platforms, a Russian supercomputer maker, alongside state defence corporation Rostec with co-financing from Russian state-run technology firm Rosnano. The Russian News Agency ITAR-TASS reports that there are going to be two initial Baikal chips: the Baikal M and the Baikal M/S. These chips will be based on the ARM Cortex-A57 64-bit processor and be employed in personal computers and microservers.

Scientists have developed a technique to sabotage the cryptographic capabilities included in Intel's Ivy Bridge line of microprocessors. The technique works without being detected by built-in tests or physical inspection of the chip. The proof of concept comes eight years after the US Department of Defense voiced concern that integrated circuits used in crucial military systems might be altered in ways that covertly undermined their security or reliability. The report was the starting point for research into techniques for detecting so-called hardware trojans. But until now, there has been little study into just how feasible it would be to alter the design or manufacturing process of widely used chips to equip them with secret backdoors. In a recently published research paper, scientists devised two such backdoors they said adversaries could feasibly build into processors to surreptitiously bypass cryptographic protections provided by the computer running the chips. The paper is attracting interest following recent revelations that the National Security Agency is exploiting weaknesses deliberately built into widely used cryptographic technologies so analysts can decode vast swaths of Internet traffic that otherwise would be unreadable.

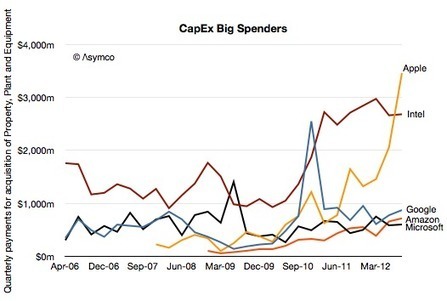

Apple's capital expenditures for the last year were $8.3 billion, which is significantly above its rivals, as this chart from Horace Dediu at Asymco shows.

Dediu believes Apple's capex is significantly above its peers because Apple is investing in data centers like Google, and process equipment like Intel. As a result, its quarterly spending is closer to Google plus Intel.

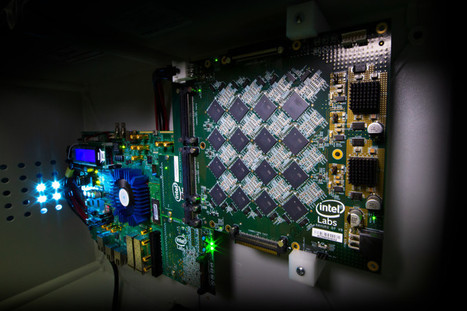

At DARPA's Electronics Resurgence Initiative 2019 in Michigan, Intel introduced a new neuromorphic computer capable of simulating 8 million neurons. Neuromorphic engineering, also known as neuromorphic computing, describes the use of systems containing electronic analog circuits to mimic neuro-biological architectures present in the nervous system. Scientists at MIT, Purdue, Stanford, IBM, HP, and elsewhere have pioneered pieces of full-stack systems, but arguably few have come closer than Intel when it comes to tackling one of the longstanding goals of neuromorphic research: a supercomputer a thousand times more powerful than any today. Case in point? Today during the Defense Advanced Research Projects Agency's (DARPA) Electronics Resurgence Initiative 2019 summit in Detroit, Michigan, Intel unveiled a system codenamed “Pohoiki Beach,” a 64-chip computer capable of simulating 8 million neurons in total. Intel Labs managing director Rich Uhlig said Pohoiki Beach will be made available to 60 research partners to “advance the field” and scale up AI algorithms like sparse coding and path planning. “We are impressed with the early results demonstrated as we scale Loihi to create more powerful neuromorphic systems. Pohoiki Beach will now be available to more than 60 ecosystem partners, who will use this specialized system to solve complex, compute-intensive problems,” said Uhlig. Pohoiki Beach packs 64 128-core, 14-nanometer Loihi neuromorphic chips, which were first detailed in October 2017 at the 2018 Neuro Inspired Computational Elements (NICE) workshop in Oregon. They have a 60-millimeter die size and contain over 2 billion transistors, 130,000 artificial neurons, and 130 million synapses, in addition to three managing Lakemont cores for task orchestration. 
Uniquely, Loihi features a programmable microcode learning engine for on-chip training of asynchronous spiking neural networks (SNNs) — AI models that incorporate time into their operating model, such that components of the model don’t process input data simultaneously. This will be used for the implementation of adaptive self-modifying, event-driven, and fine-grained parallel computations with high efficiency.
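As an illustration of what "incorporating time" means for a spiking neural network, here is a minimal sketch of a leaky integrate-and-fire neuron, a common textbook model. It is not Loihi's actual neuron model or programming interface; the class name, the `threshold` and `leak` parameters, and the input values are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """Textbook leaky integrate-and-fire neuron (illustrative, not Loihi's model)."""

    threshold: float = 1.0  # potential at which the neuron emits a spike
    leak: float = 0.9       # fraction of the potential kept from one step to the next
    potential: float = 0.0  # current membrane potential

    def step(self, input_current: float) -> bool:
        """Advance one time step and report whether the neuron spiked."""
        # Decay the stored potential, then integrate the new input.
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


def run(neuron: LIFNeuron, inputs: list[float]) -> list[int]:
    """Feed a sequence of inputs and return the time steps at which spikes occur."""
    return [t for t, current in enumerate(inputs) if neuron.step(current)]


spike_times = run(LIFNeuron(), [0.3, 0.3, 0.3, 0.3, 0.3, 0.0, 0.0, 0.9, 0.2])
print(spike_times)
```

The neuron's output depends on when inputs arrive, not only on their sum: weak inputs must accumulate over several steps before a spike, and the potential leaks away during quiet steps. That event-driven behaviour is what distinguishes this family of models from networks that process all inputs in a single pass.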

Intel inked a deal to acquire Mobileye, which the chipmaker’s chief Brian Krzanich said enables it to “accelerate the future of autonomous driving with improved performance in a cloud-to-car solution at a lower cost for automakers”. Mobileye offers technology covering computer vision and machine learning, data analysis, localisation and mapping for advanced driver assistance systems and autonomous driving. The deal is said to fit with Intel’s strategy to “invest in data-intensive market opportunities that build on the company’s strengths in computing and connectivity from the cloud, through the network, to the device”. A combined Intel and Mobileye automated driving unit will be based in Israel and headed by Amnon Shashua, co-founder, chairman and CTO of the acquired company. This, Intel said, “will support both companies’ existing production programmes and build upon relationships with car makers, suppliers and semiconductor partners to develop advanced driving assist, highly-autonomous and fully autonomous driving programmes”.

Japan’s SoftBank is buying U.K.-based chip design firm ARM Holdings for about $32 billion, according to the FT.

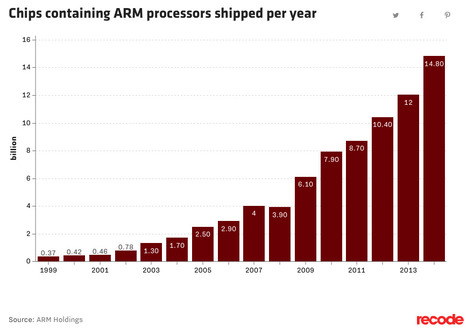

Why? Everything is a computer now, and ARM has been one of the winners of the mobile revolution.

ARM designs chips, but doesn't actually make them, for a huge variety of devices. It dominates the market for smartphones (Apple is a big client, as is Samsung), and its chips show up in other consumer gadgets, as well as in more industrial devices and “internet of things” sensors.

The number of chips containing ARM processors reached almost 15 billion in 2015, up from about 6 billion in 2010.

The move is a big one for SoftBank CEO Masa Son after his would-be successor, former Google executive Nikesh Arora, stepped away from the company last month. (Talks presumably started while Arora was still there.)

One key question is whether other firms will let SoftBank purchase ARM or if there will be a bidding war. Apple, arguably ARM’s most important client, and Intel, which lost the mobile chip war to ARM, are both potential buyers.

The offer is already a generous multiple. As the FT notes, it’s some 70 times ARM’s net income last year. That’s around the same price-to-earnings ratio as Facebook stock.
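The multiple quoted above implies a rough figure for ARM's net income. The sketch below works it out from the two approximate numbers in the text (an offer of about $32 billion and a multiple of some 70); the result is only as precise as those inputs, and the variable names are illustrative.

```python
# Approximate figures as quoted in the text.
offer_usd = 32e9   # offer price, about $32 billion
pe_multiple = 70   # offer is some 70 times last year's net income

# Net income implied by dividing the offer by the multiple.
implied_net_income = offer_usd / pe_multiple
print(f"Implied net income: about ${implied_net_income / 1e6:.0f} million")
```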

The summit will take place on Thursday, October 30 and Friday, October 31, 2014 at École Polytechnique in Paris, France and will feature Mark Shuttleworth along with speakers from Intel, Microsoft, and Rackspace.

Is Intel's new CEO rethinking the “x86 and only x86” strategy? Last week, a specialty semiconductor company called Altera announced that Intel would fabricate some of its chips containing a 64-bit ARM processor. The company's business consists of offering faster development times through “programmable logic” circuits. Instead of a “hard circuit” to be designed, manufactured, tested, debugged, modified and sent back to the manufacturing plant in lengthy and costly cycles, you buy a “soft circuit” from Altera and similar companies (Xilinx comes to mind). This more expensive device can be reprogrammed on the spot to assume a different function, or correct the logic in the previous iteration. Pay more and get functioning hardware sooner, without slow and costly turns through a manufacturing process. With this in mind, what Intel will someday manufacture for Altera isn't the 64-bit ARM processor that excited some observers: “Intel Makes 14nm ARM for Altera”. The Stratix 10 circuits Altera contracts to Intel manufacturing are complicated and expensive ($500 and up) FPGA (Field Programmable Gate Array) devices where the embedded ARM processor plays a supporting, not central, role. This isn't the $20-or-less price level arena in which Intel has so far declined to compete.

While Apple is now committed to Intel in computers and is unlikely to switch in the next few years, some engineers say a shift to its own designs is inevitable as the features of mobile devices and PCs become more similar, two people said. Any change would be a blow to Intel, the world’s largest processor maker, which has already been hurt by a stagnating market for computers running Microsoft Corp. (MSFT)’s Windows software and its failure to gain a foothold in mobile gadgets.

As handheld devices increasingly function like PCs, the engineers working on this project within Apple envision machines that use a common chip design. If Apple Chief Executive Officer Tim Cook wants to offer the consumer of 2017 and beyond a seamless experience on laptops, phones, tablets and televisions, it will be easier to build if all the devices have a consistent underlying chip architecture, according to one of the people.

Vertical integration is the "tech" trend of the moment...